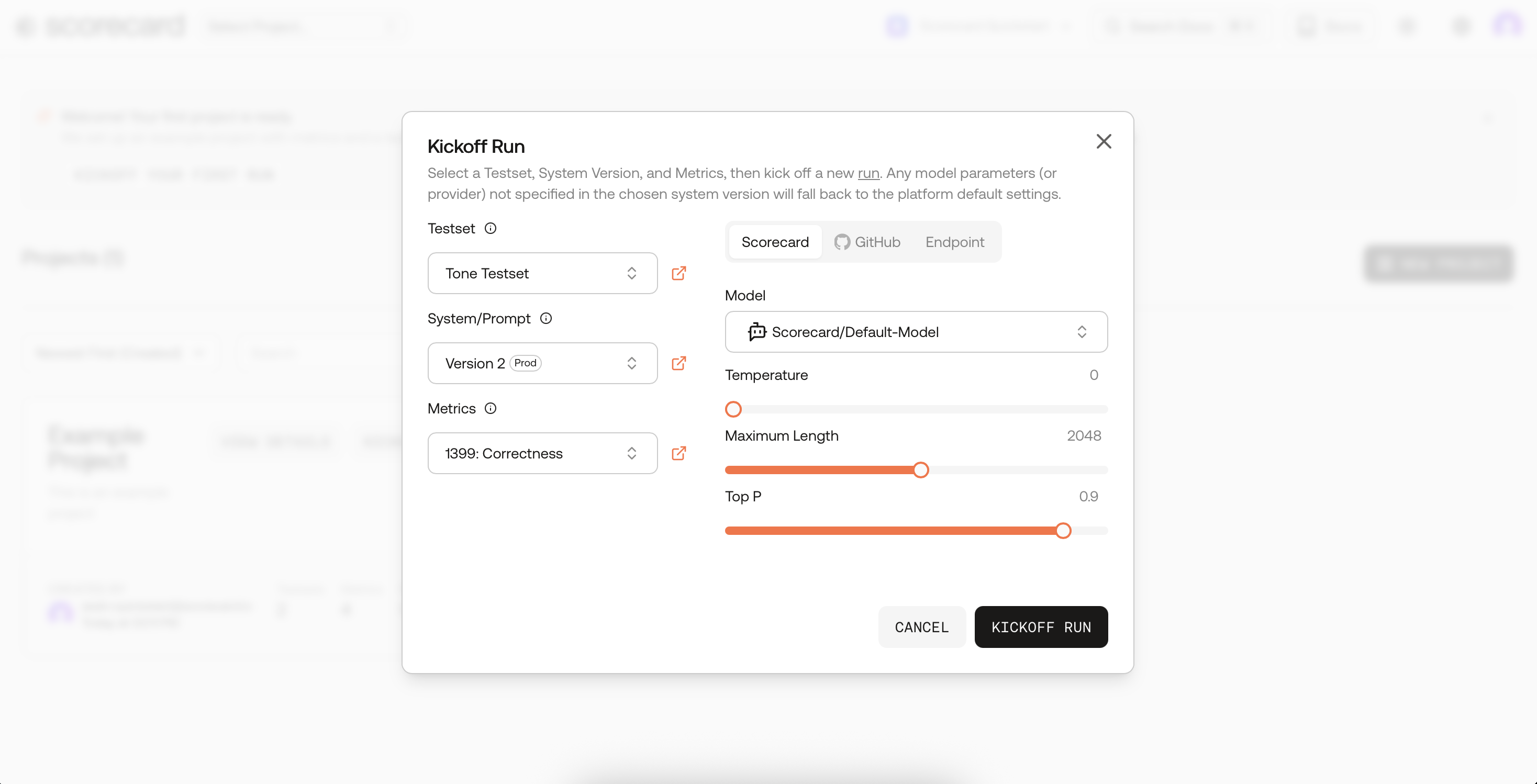

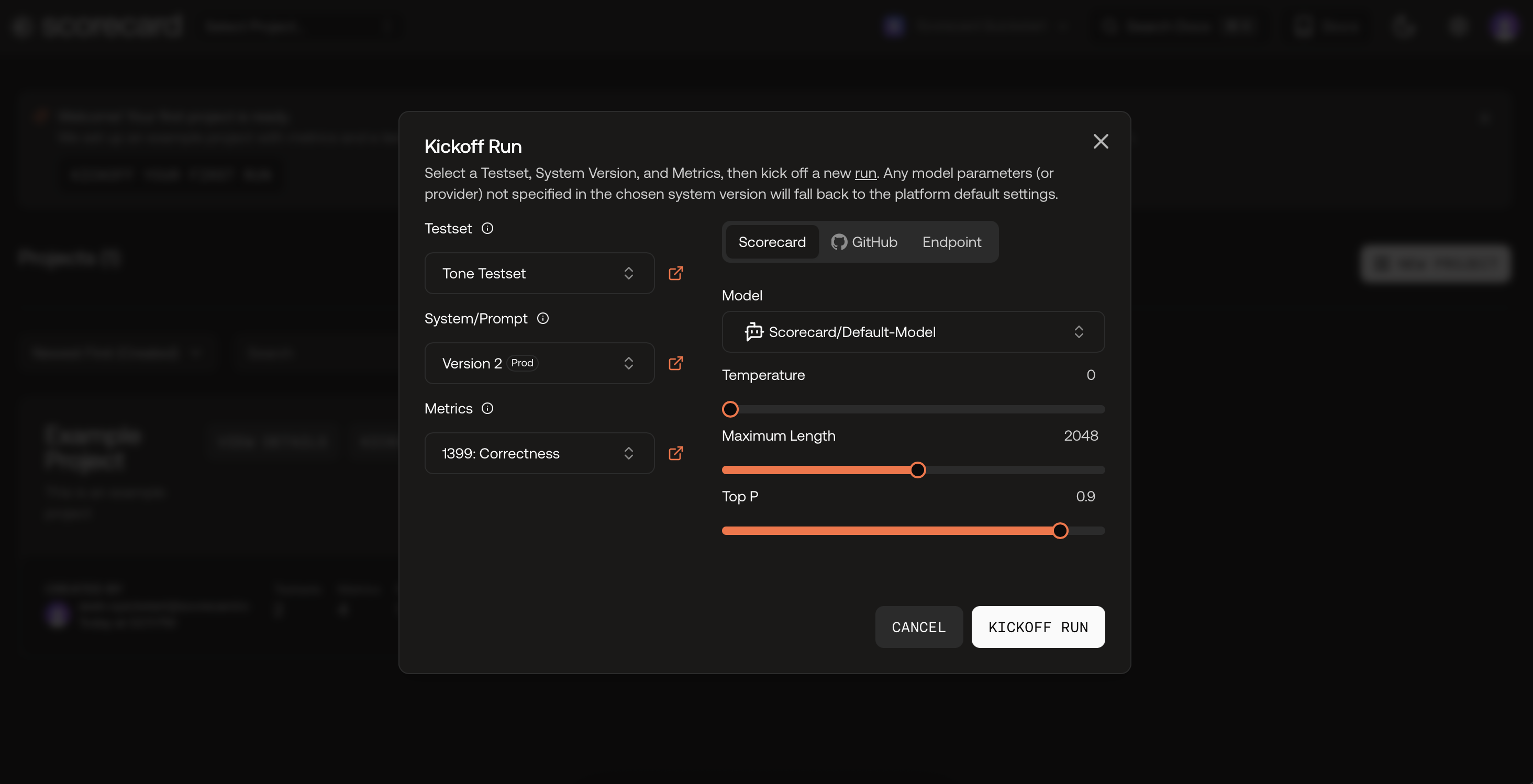

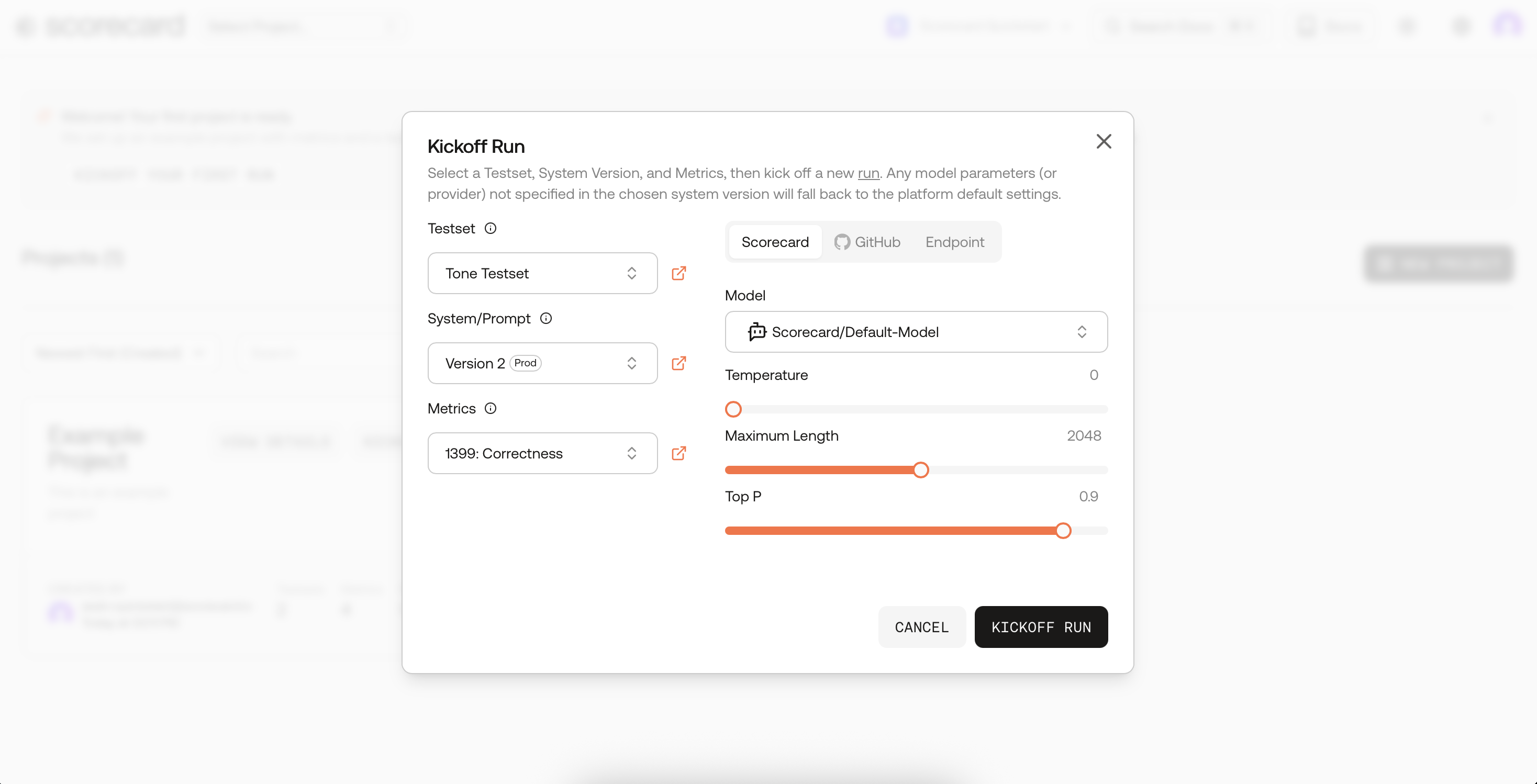

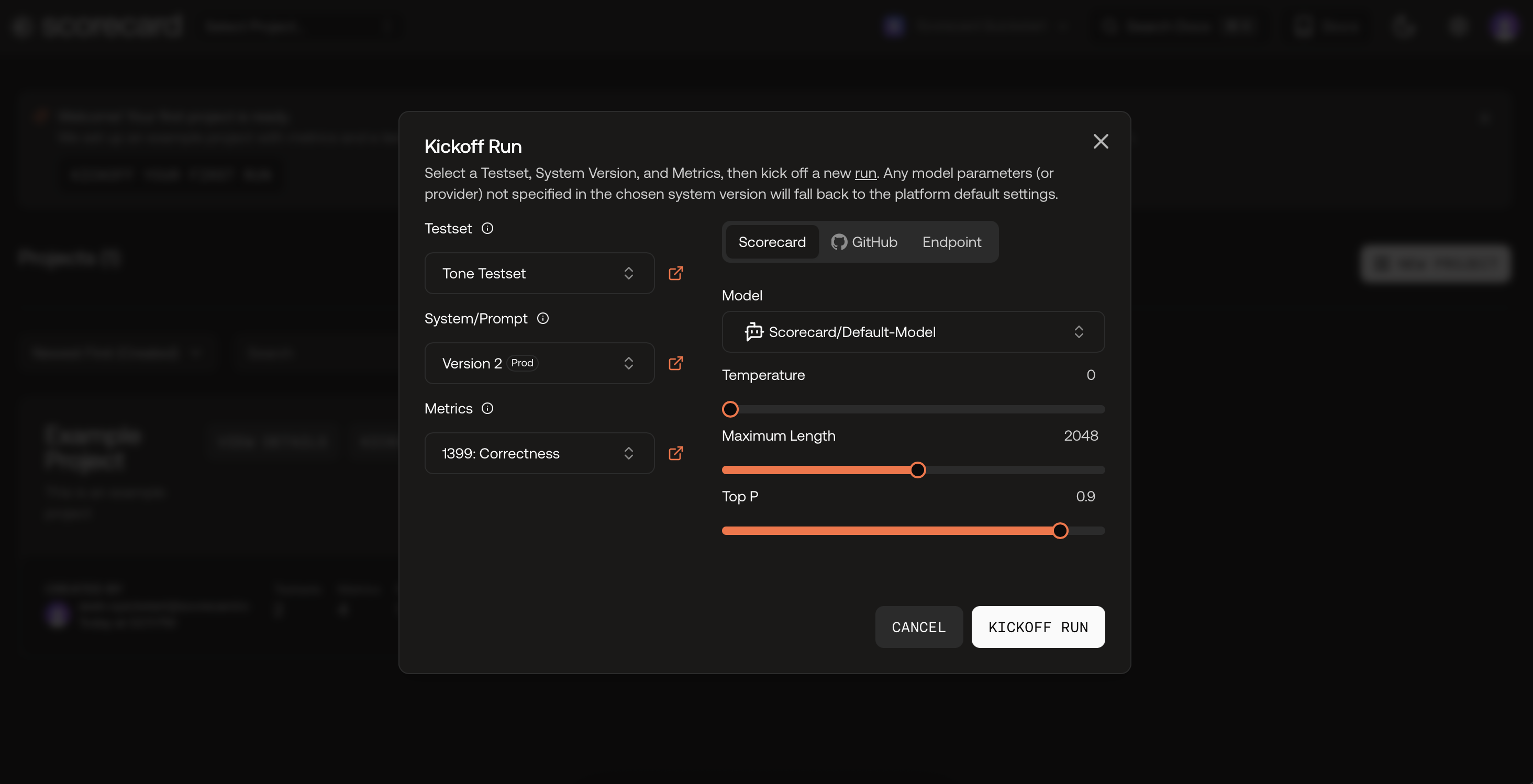

Open the Example Project and click Kickoff Run

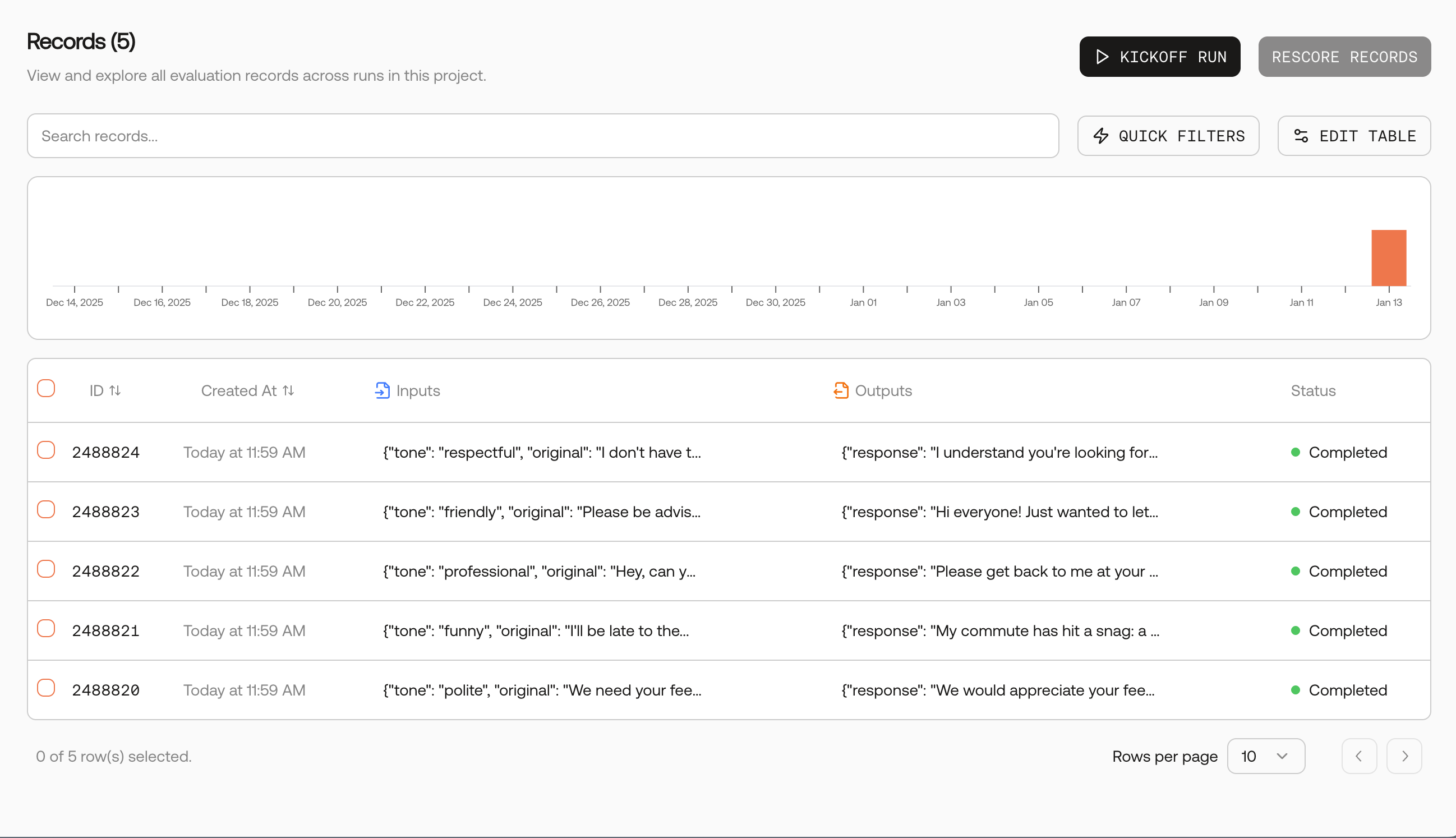

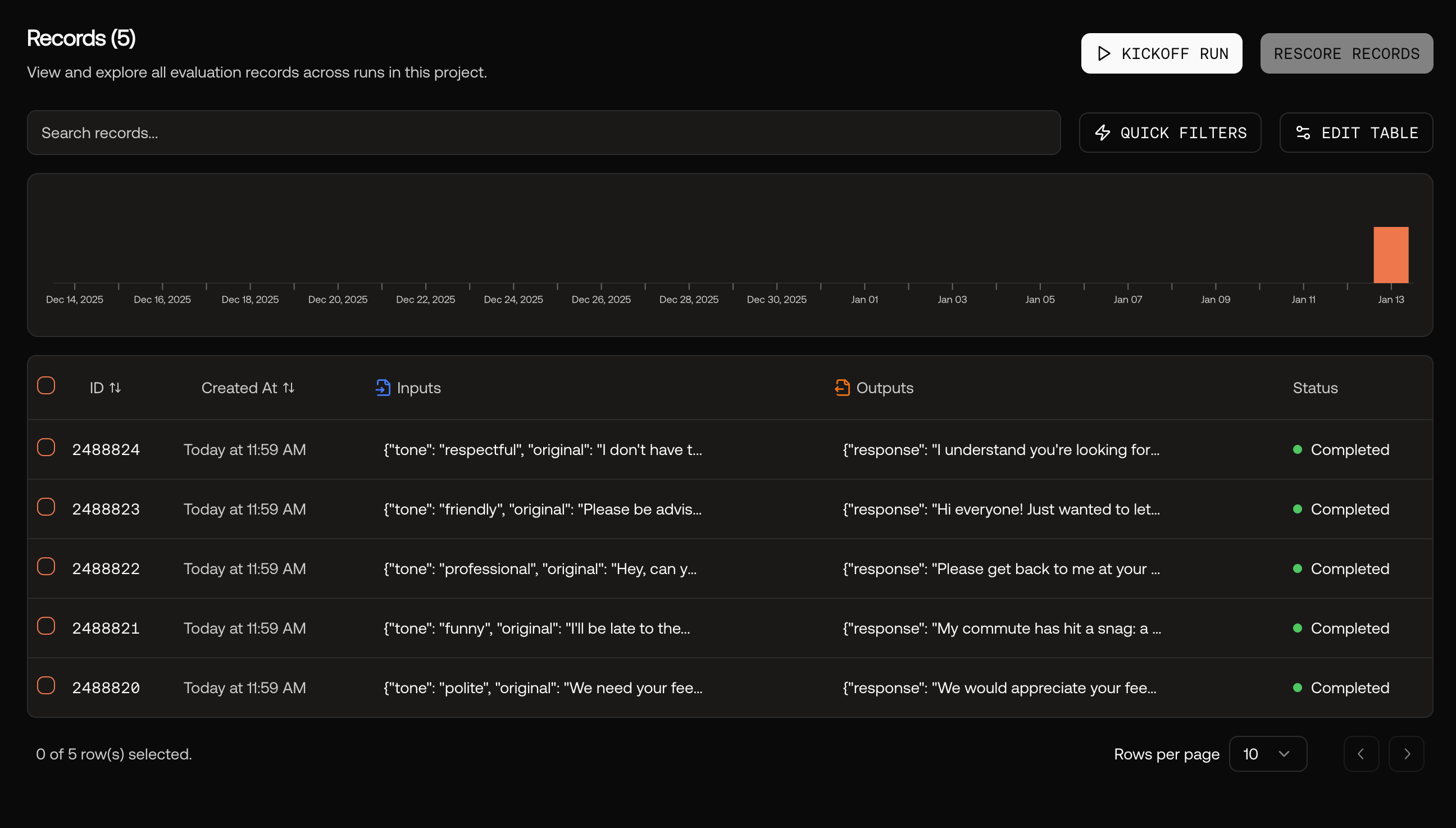

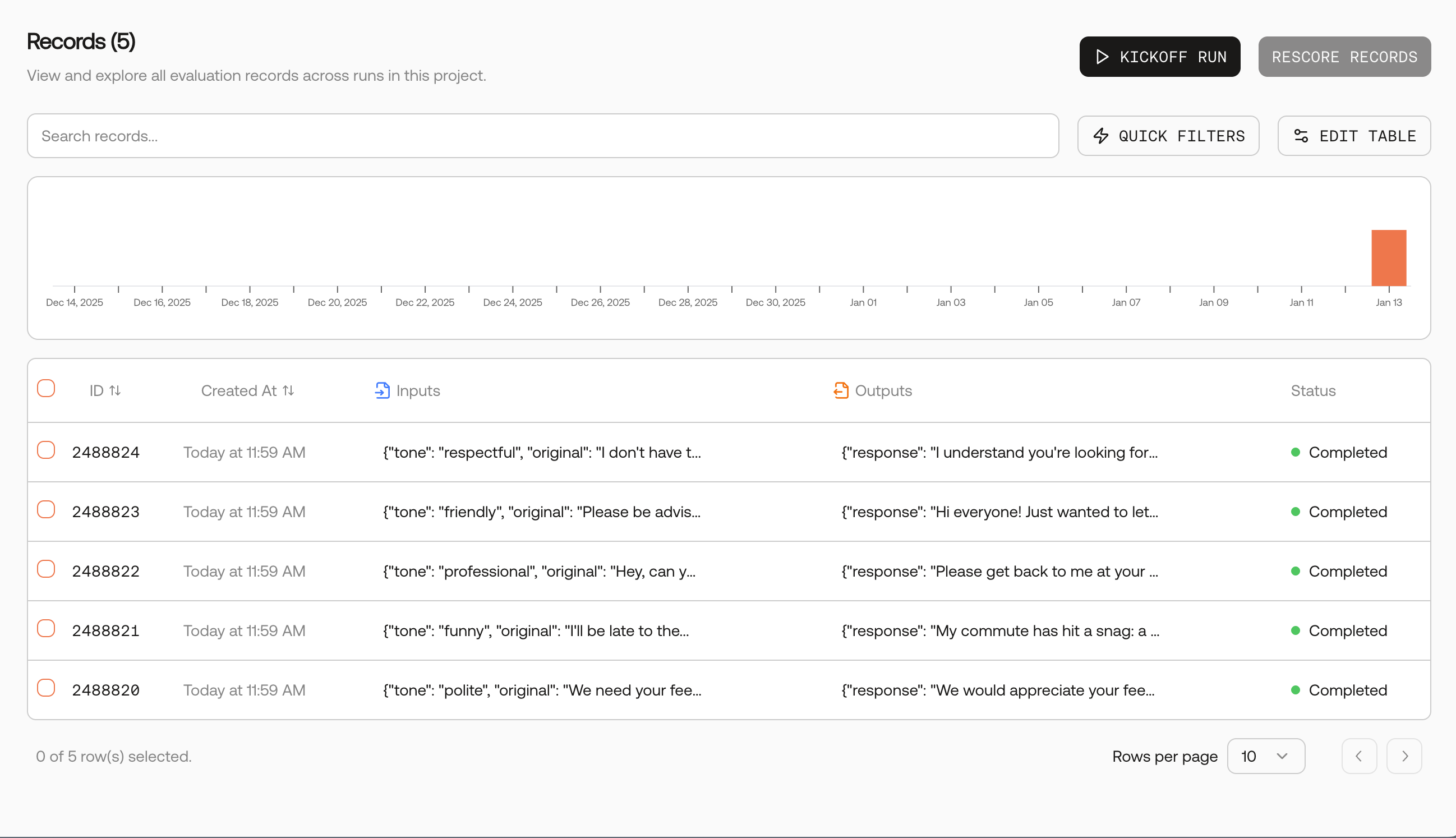

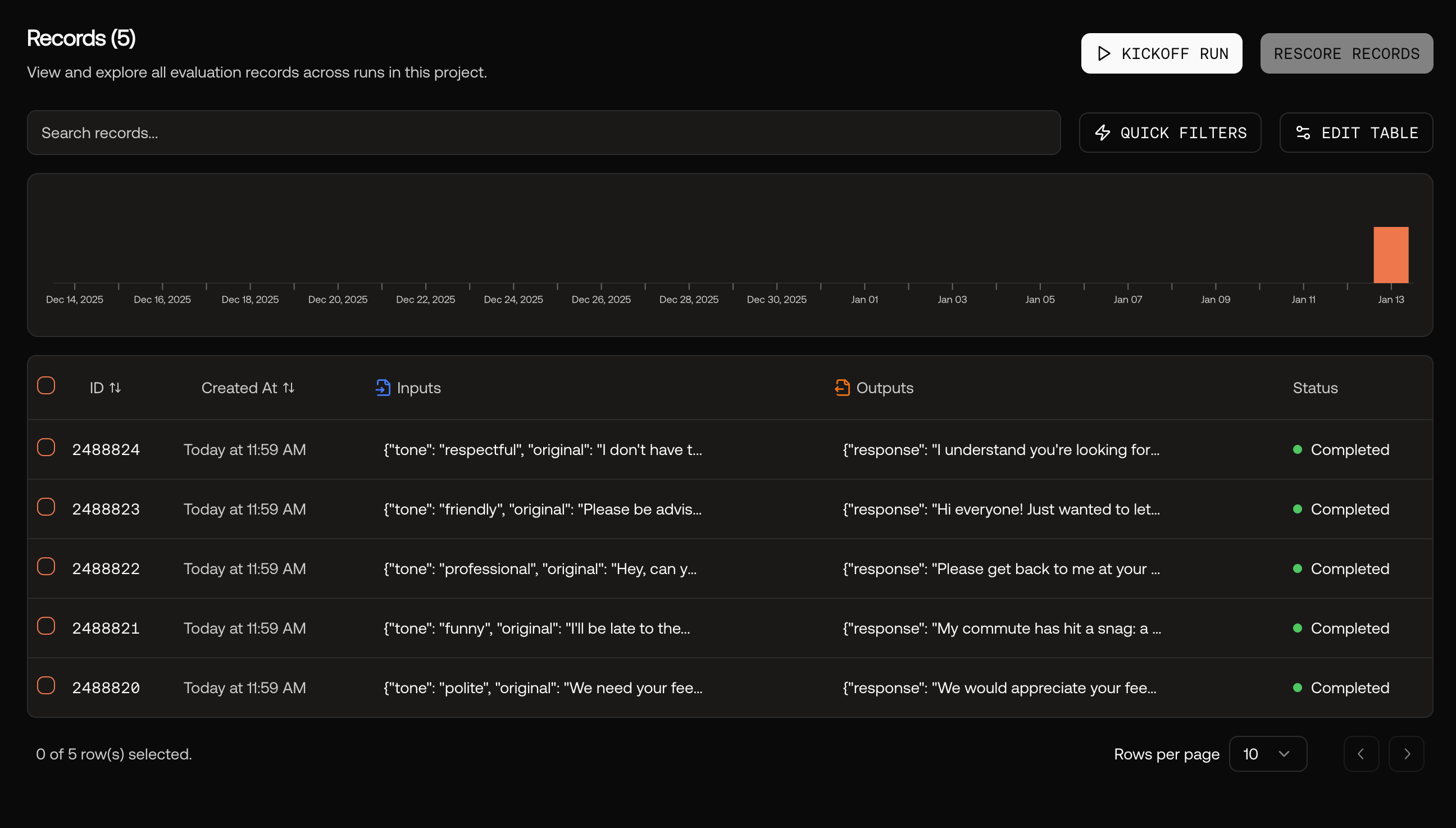

After creating your organization, go to the Example Project’s Records page. Click the Kickoff Run button in the top right to open the Kickoff Run modal.

Kick off your first run

In the modal, you can keep the default Testset, Agent Integration (Prompt, GitHub Action, or Endpoint), and Metrics. Click “Kickoff run” to create the run and automatically evaluate the system.

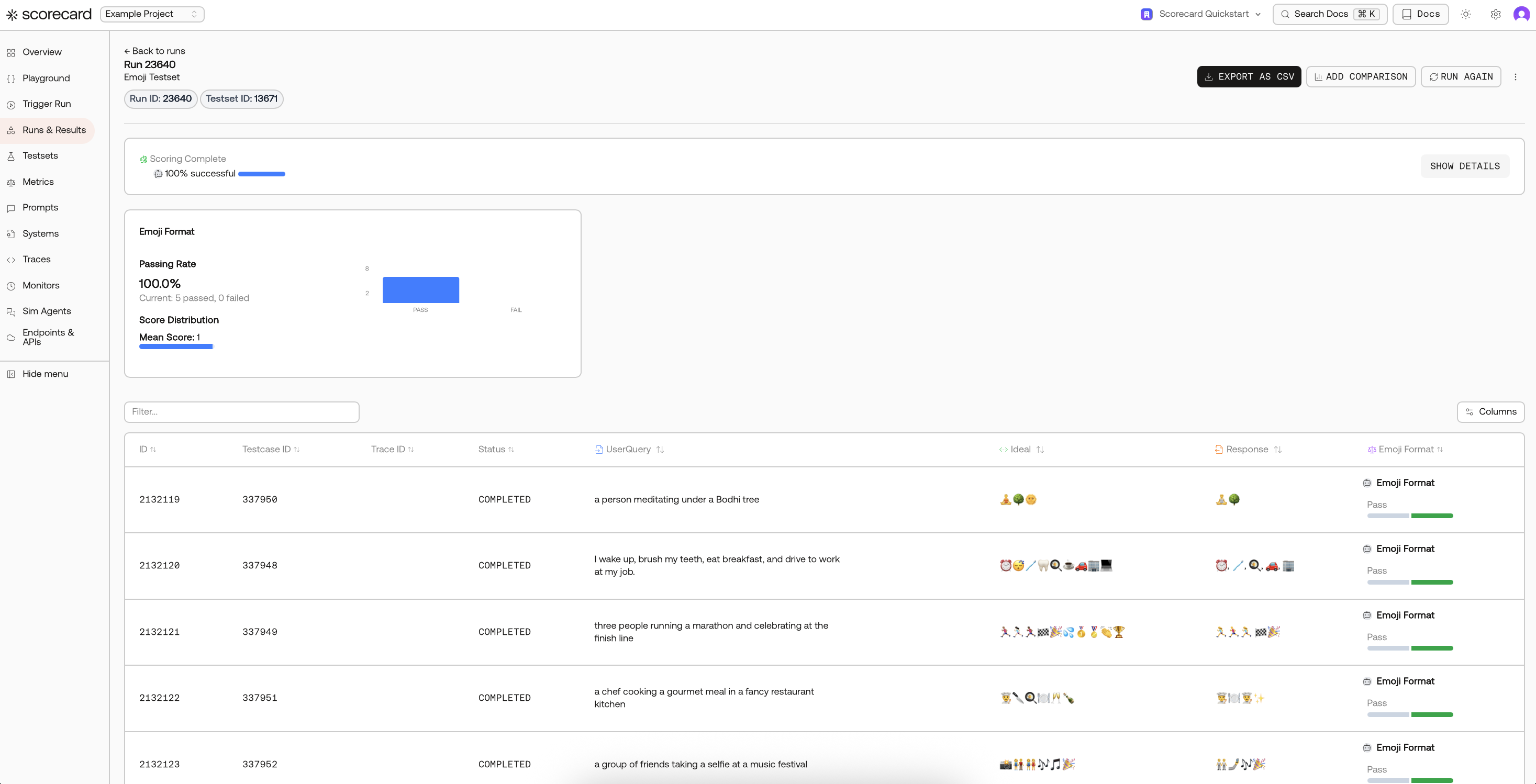

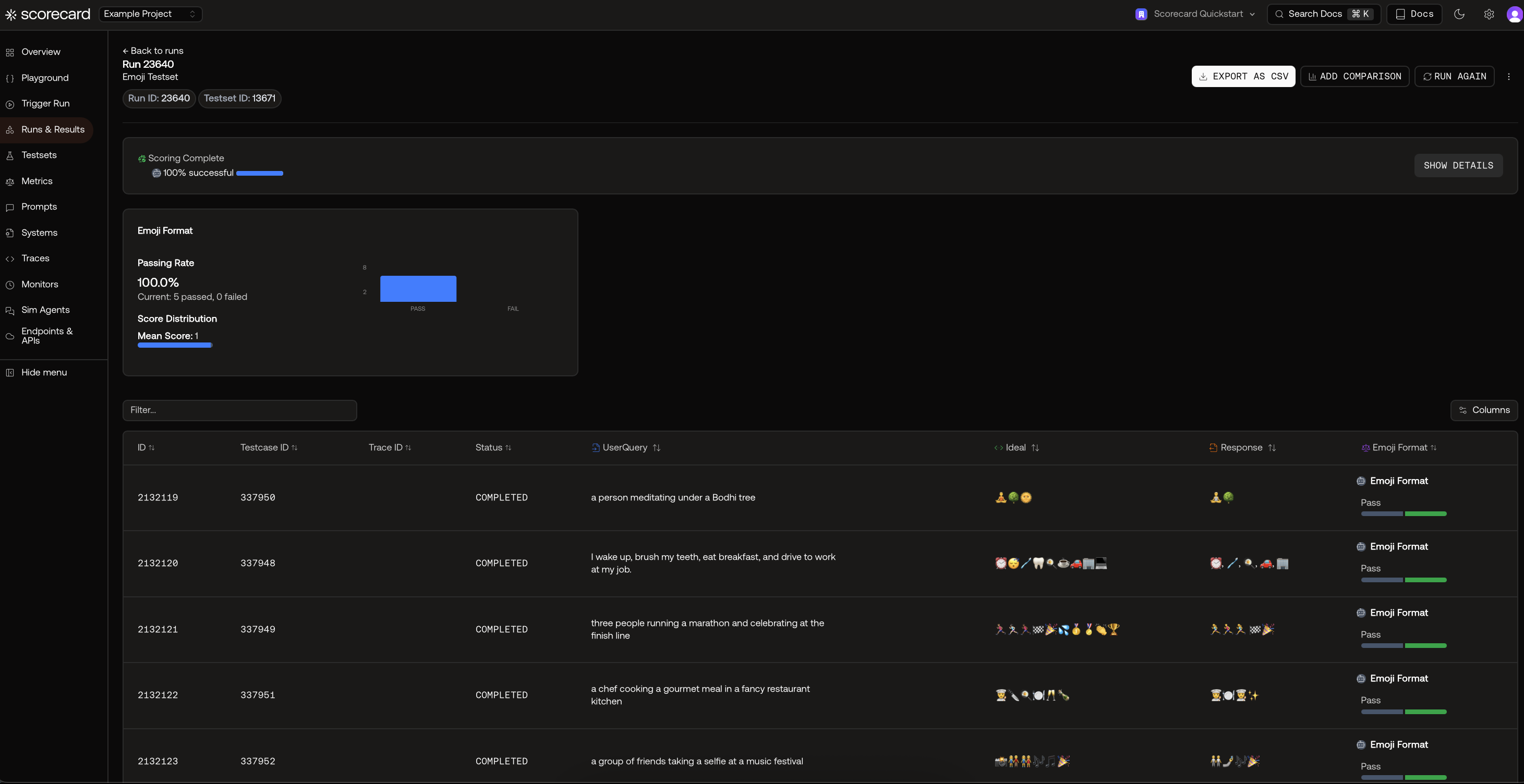

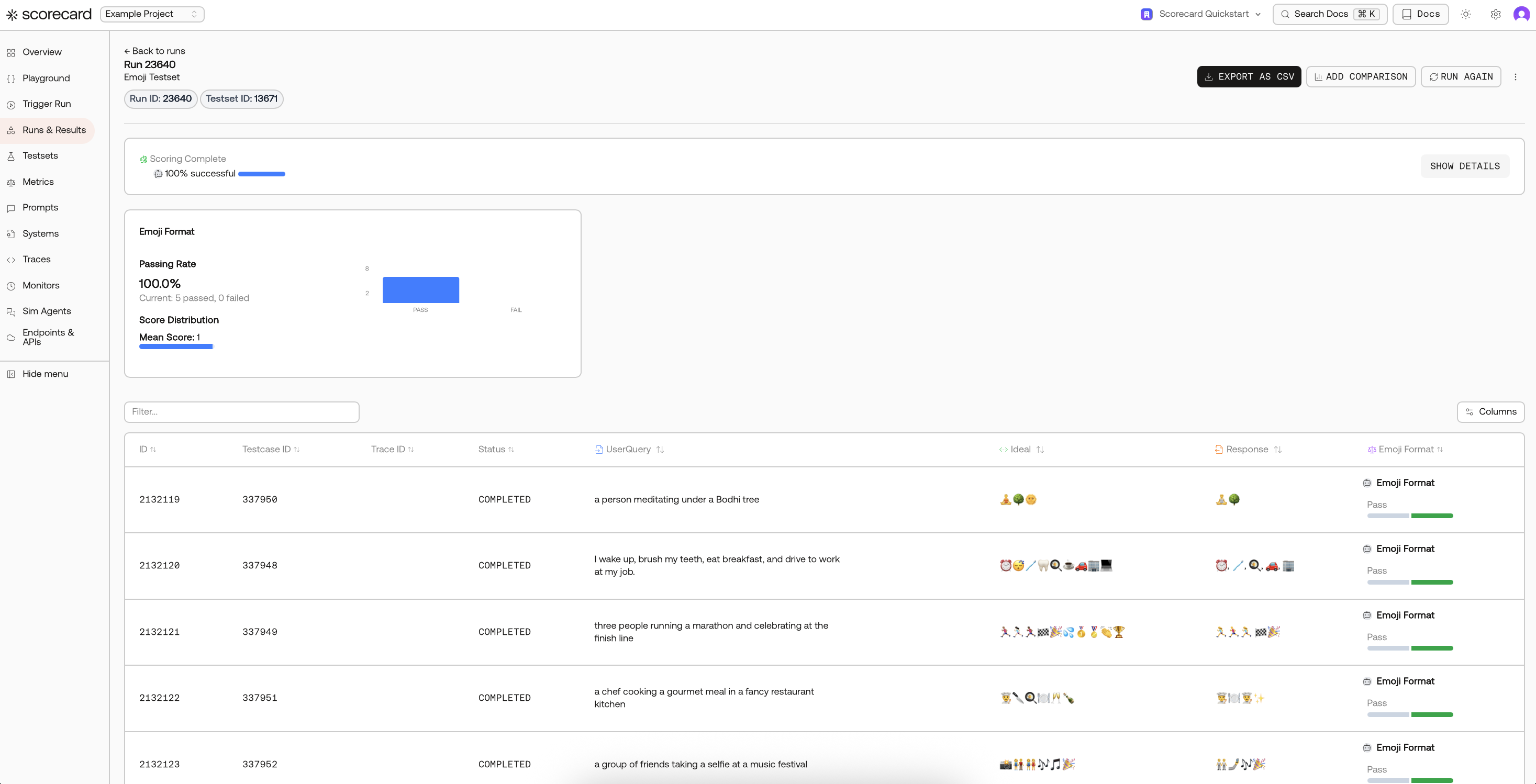

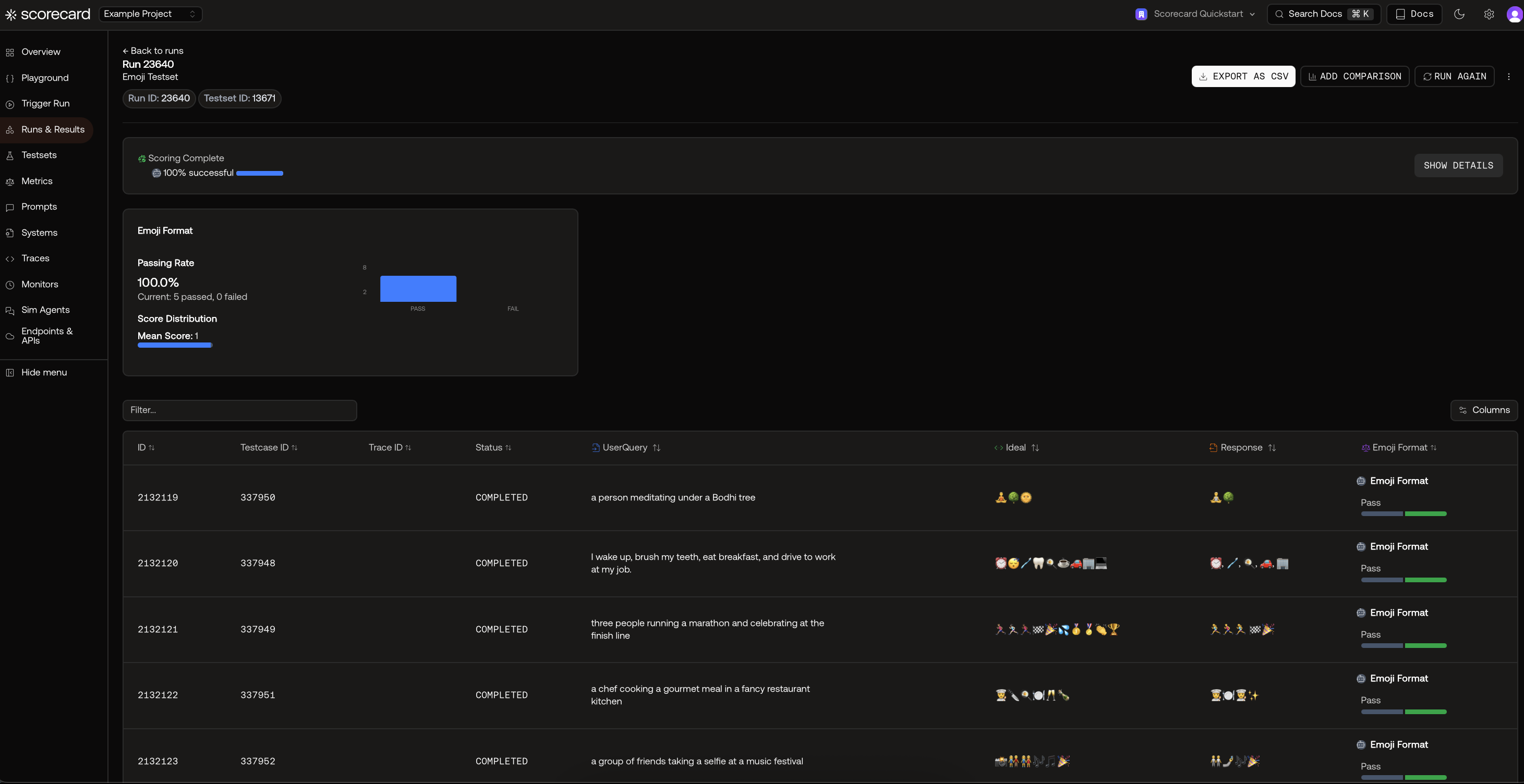

View results

After your run starts and scoring completes, open the results to see per-record scores, distributions, and explanations.

Click any record in the Records page to view individual testcase inputs, outputs, and score explanations.
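
To make the relationship between per-record scores and the distribution view concrete, here is a minimal sketch in plain Python. It does not use the Scorecard SDK or API; the record fields (`testcase`, `score`, `explanation`) and the sample values are assumptions chosen for illustration only.

```python
from collections import Counter
from statistics import mean

# Hypothetical per-record results. Field names and values are illustrative
# and do not reflect Scorecard's actual export format.
records = [
    {"testcase": "tc-1", "score": 5, "explanation": "Matches the ideal rewrite."},
    {"testcase": "tc-2", "score": 3, "explanation": "Tone is right, meaning drifts."},
    {"testcase": "tc-3", "score": 5, "explanation": "Matches the ideal rewrite."},
    {"testcase": "tc-4", "score": 4, "explanation": "Minor wording differences."},
]


def summarize(records):
    """Aggregate per-record scores into a mean and a score distribution."""
    scores = [r["score"] for r in records]
    distribution = Counter(scores)
    return {
        "mean": mean(scores),
        # Sort by score so the distribution reads low to high.
        "distribution": dict(sorted(distribution.items())),
    }


summary = summarize(records)
print(summary)
```

The per-record explanations stay attached to each record, which is why drilling into a single record is still useful after looking at the aggregate.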

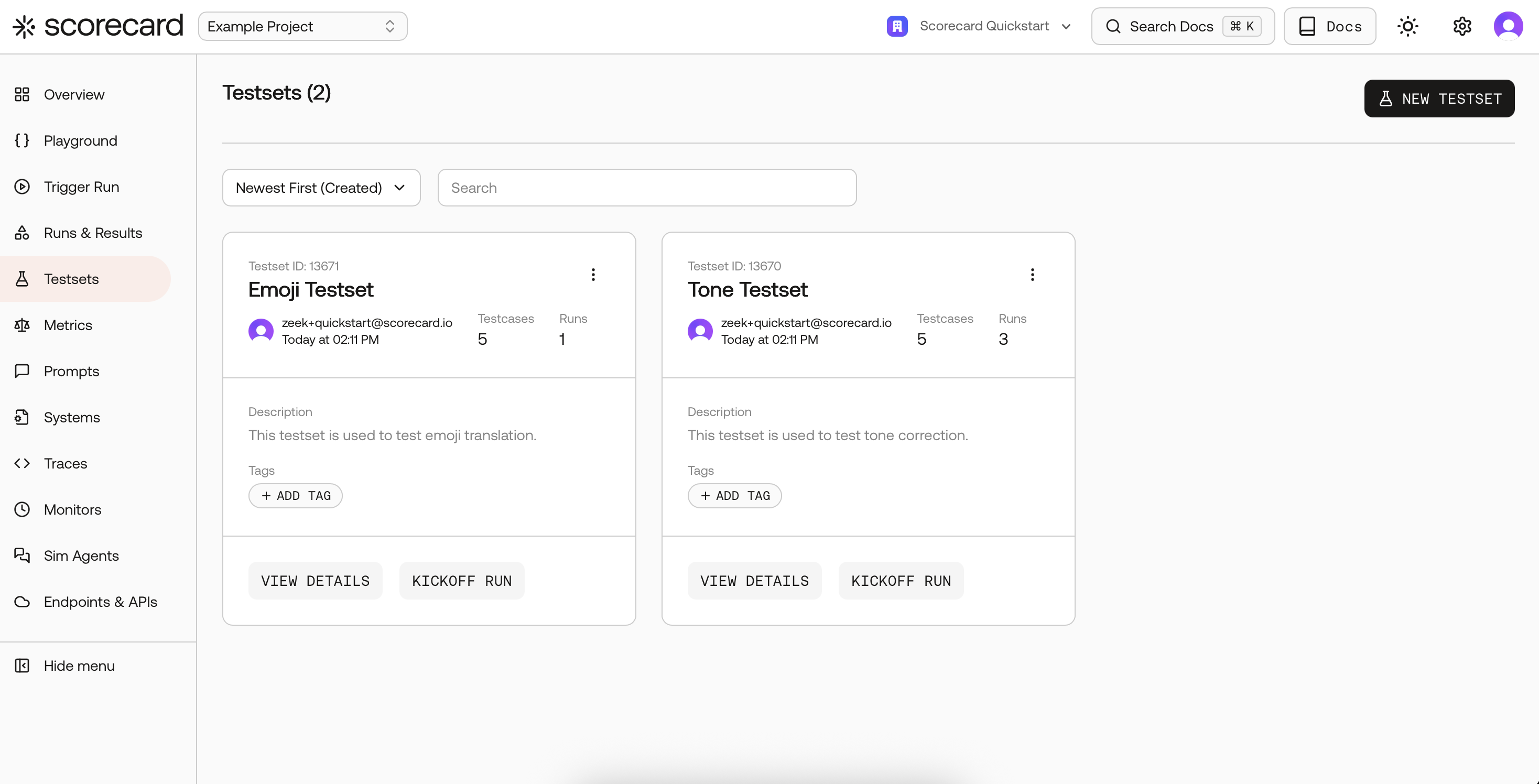

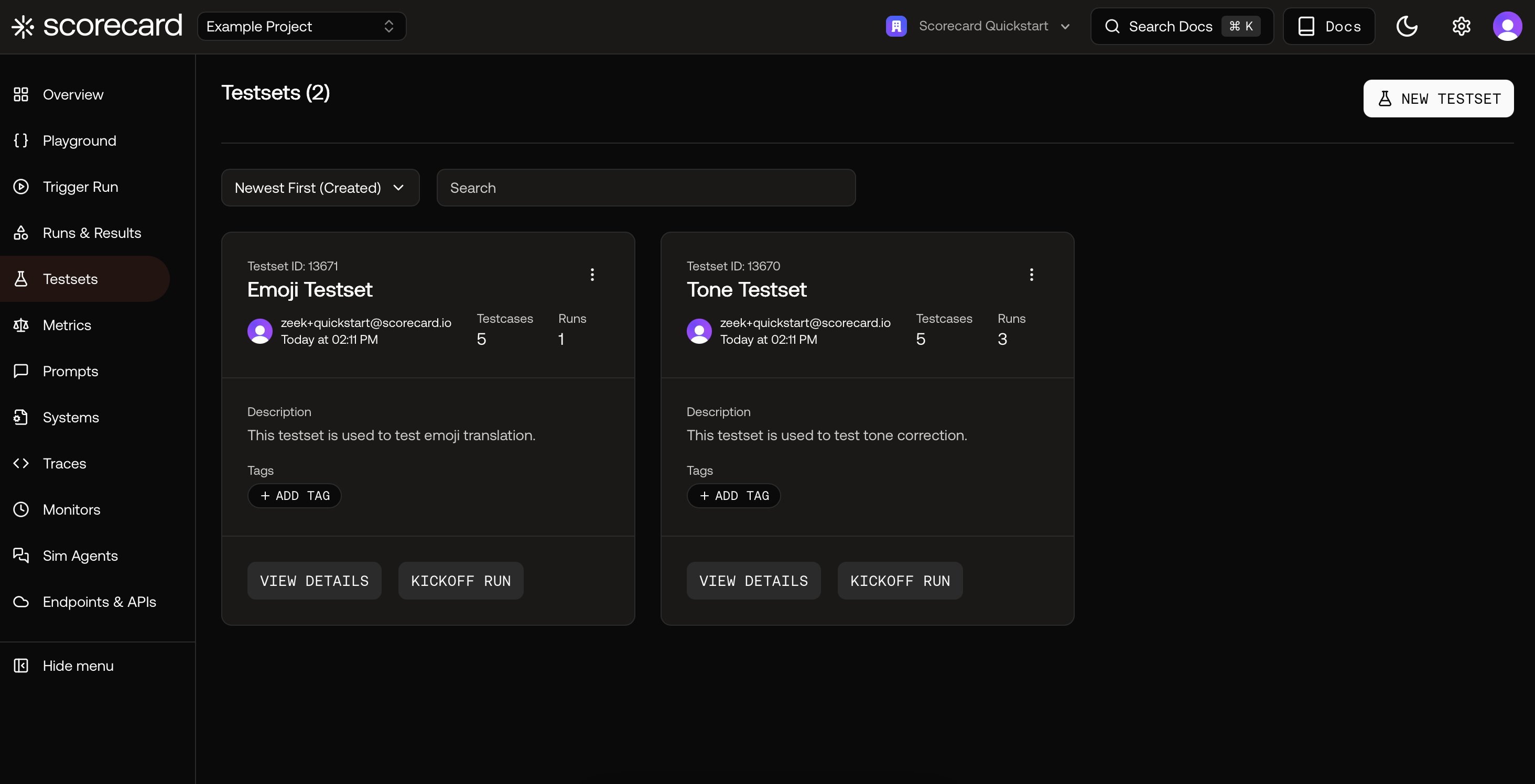

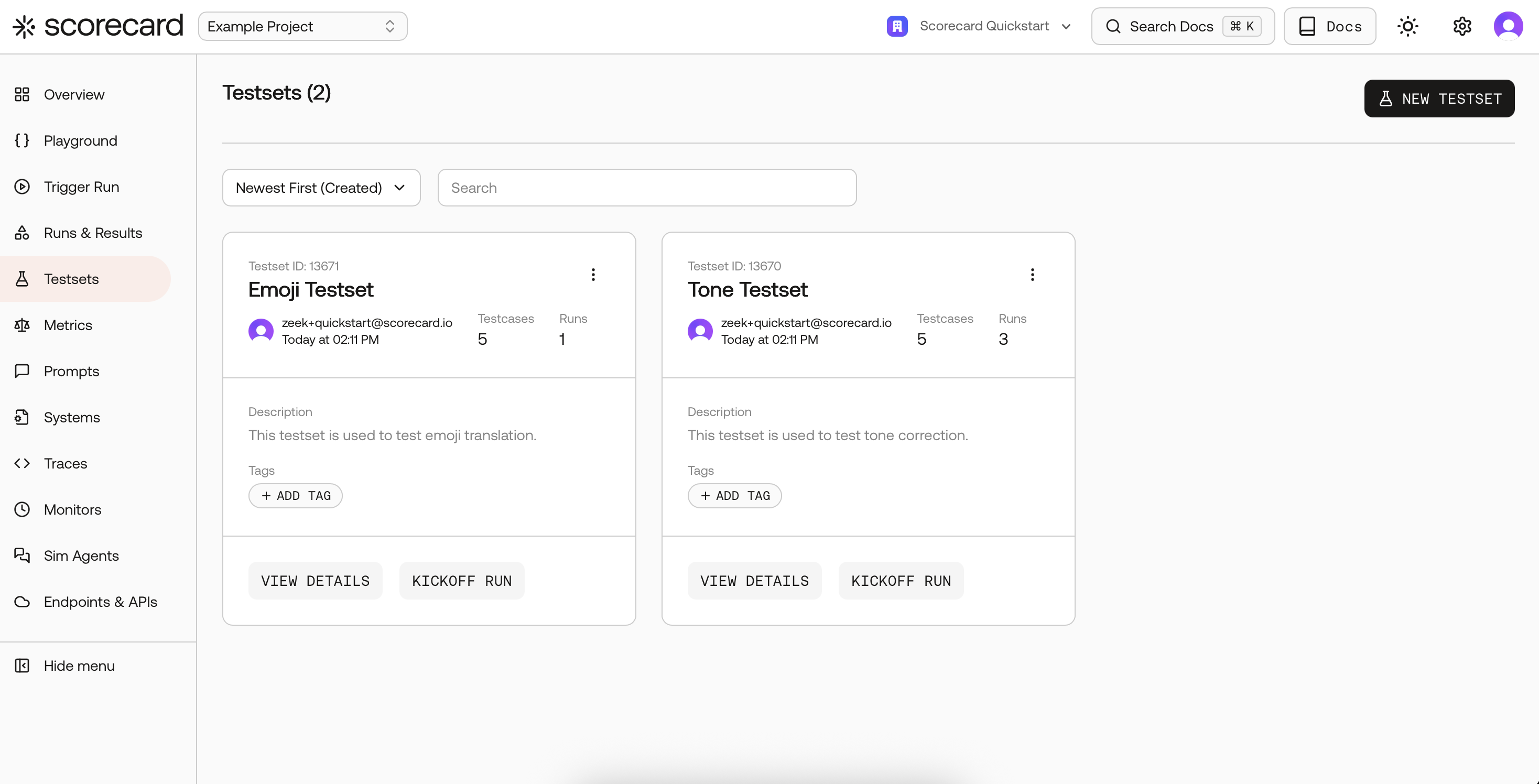

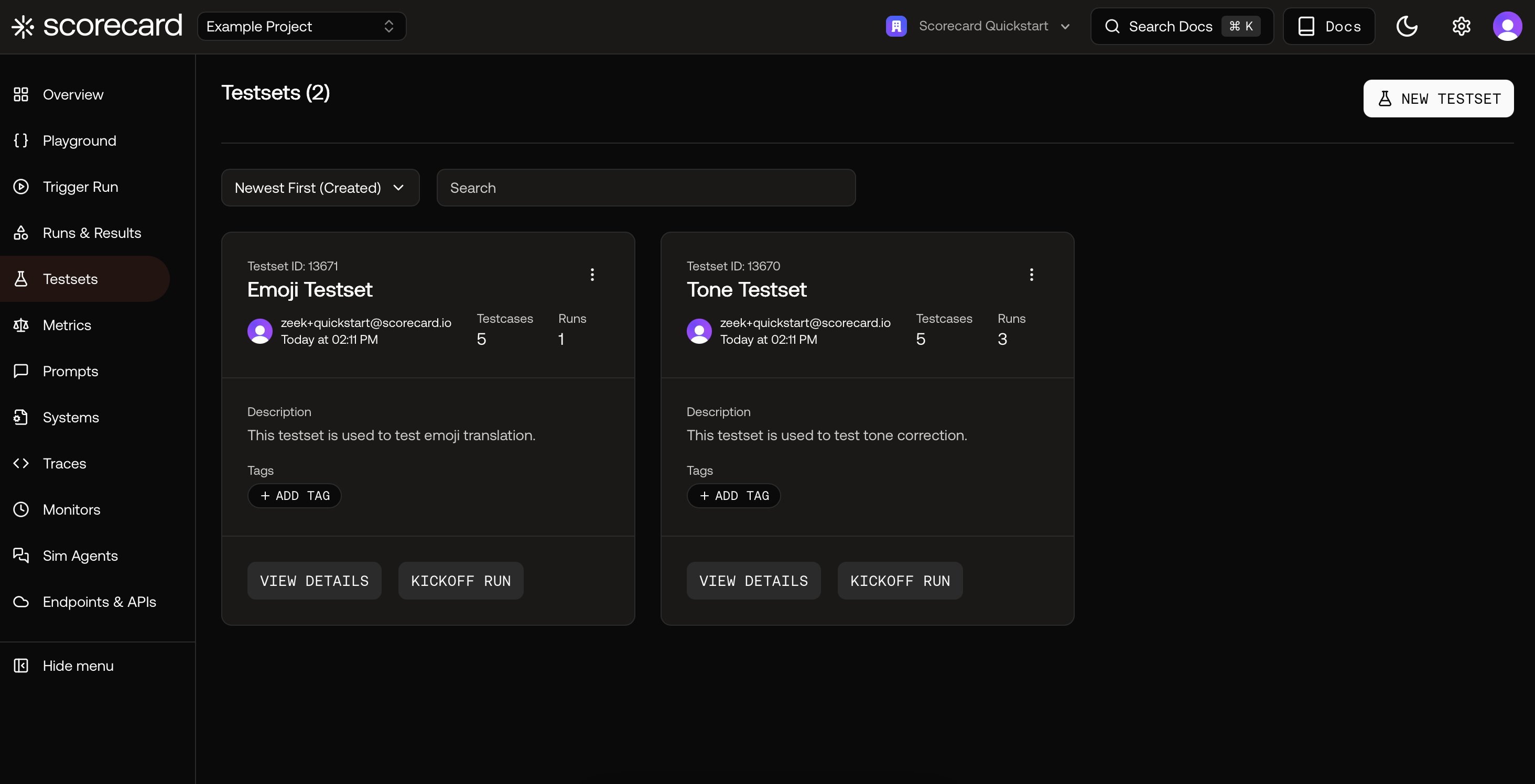

Browse the Example Project

Learn how the sample data is organized:

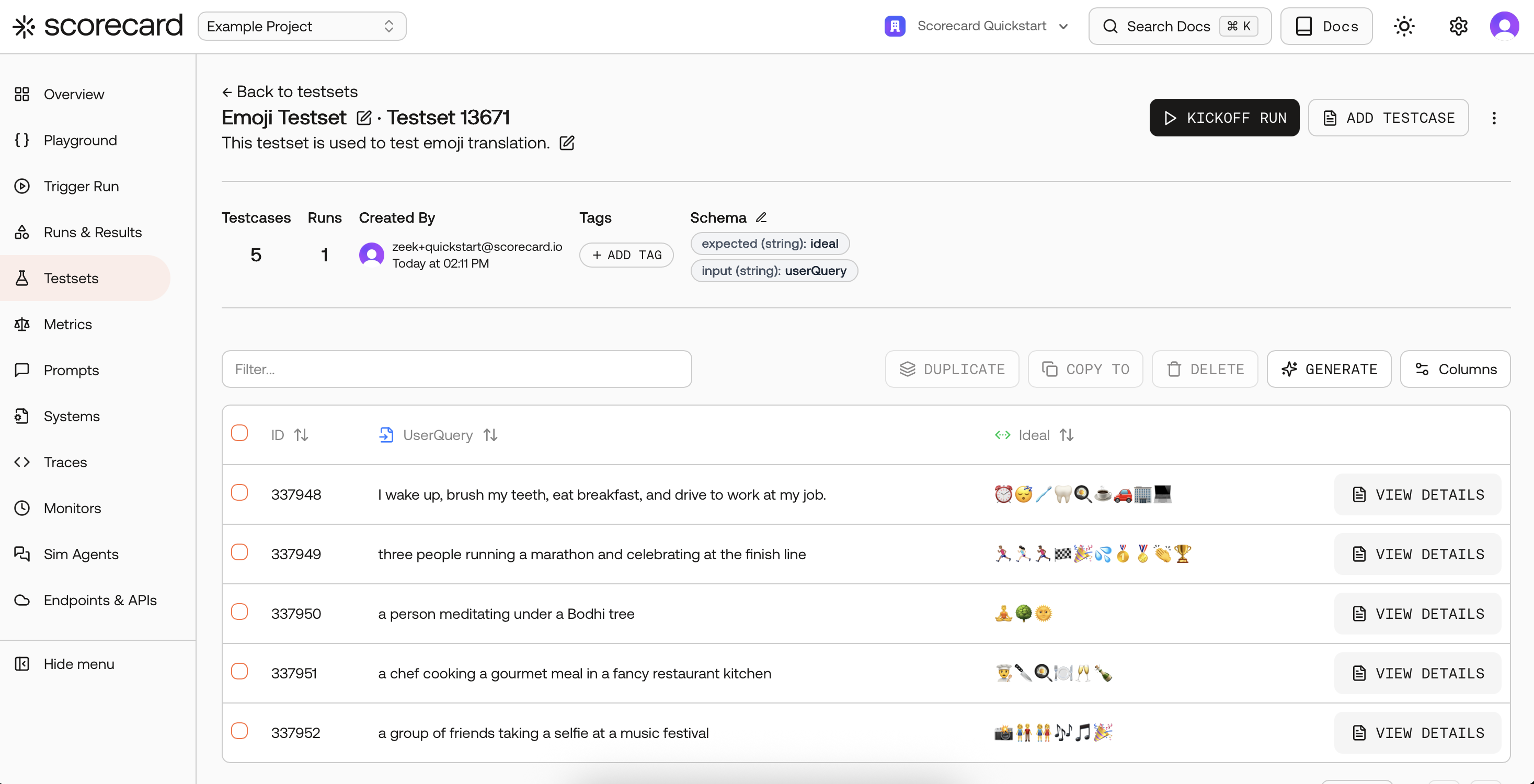

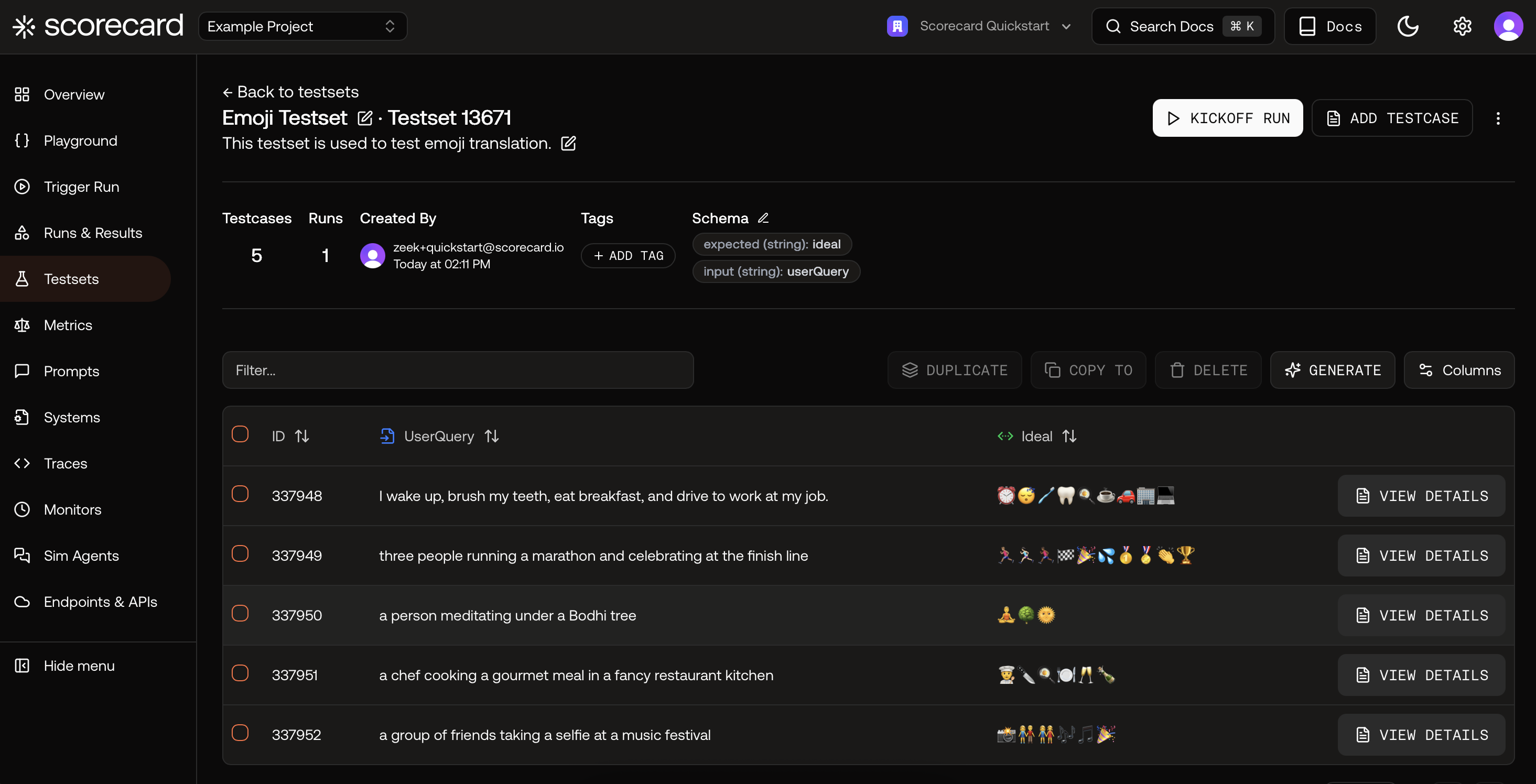

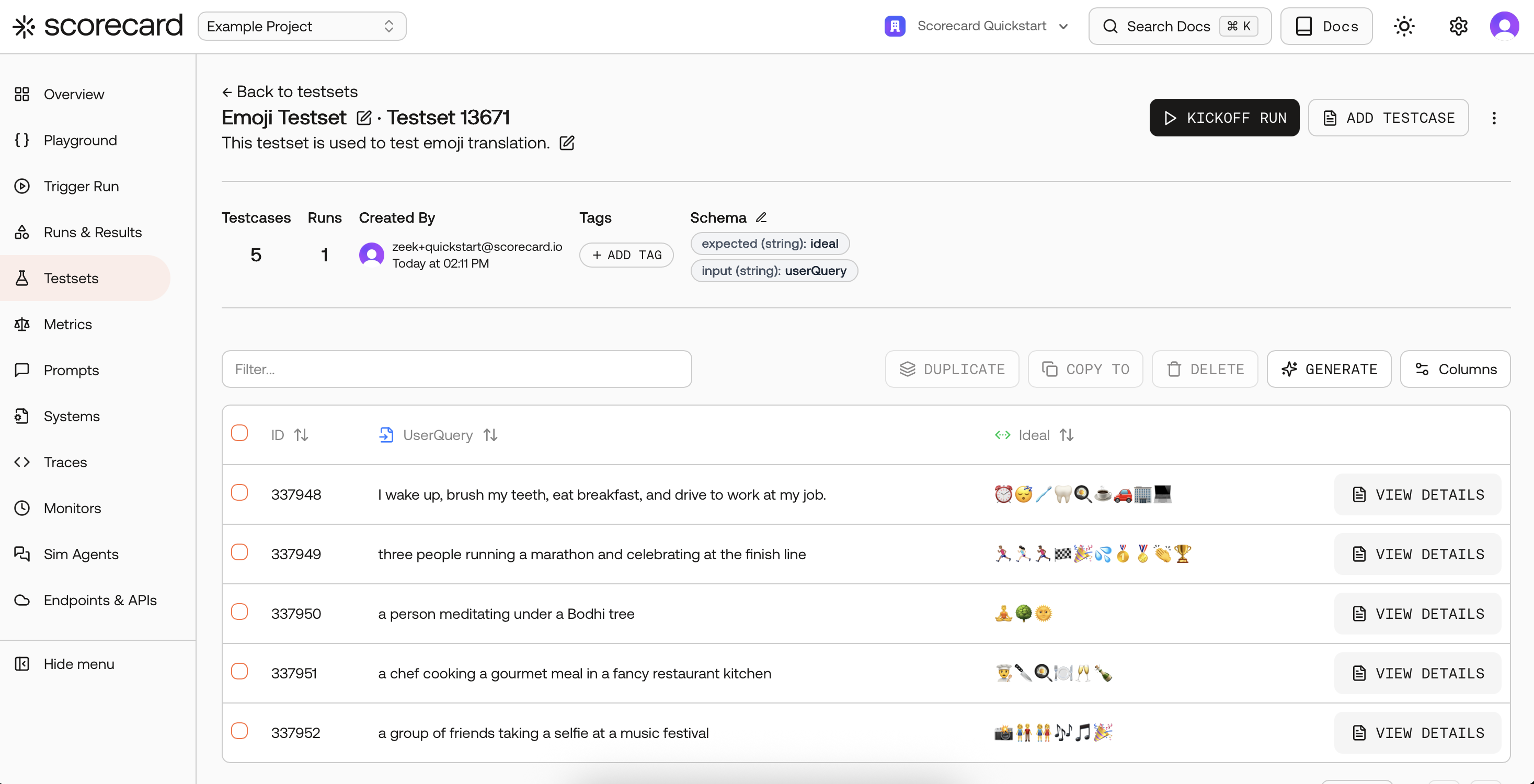

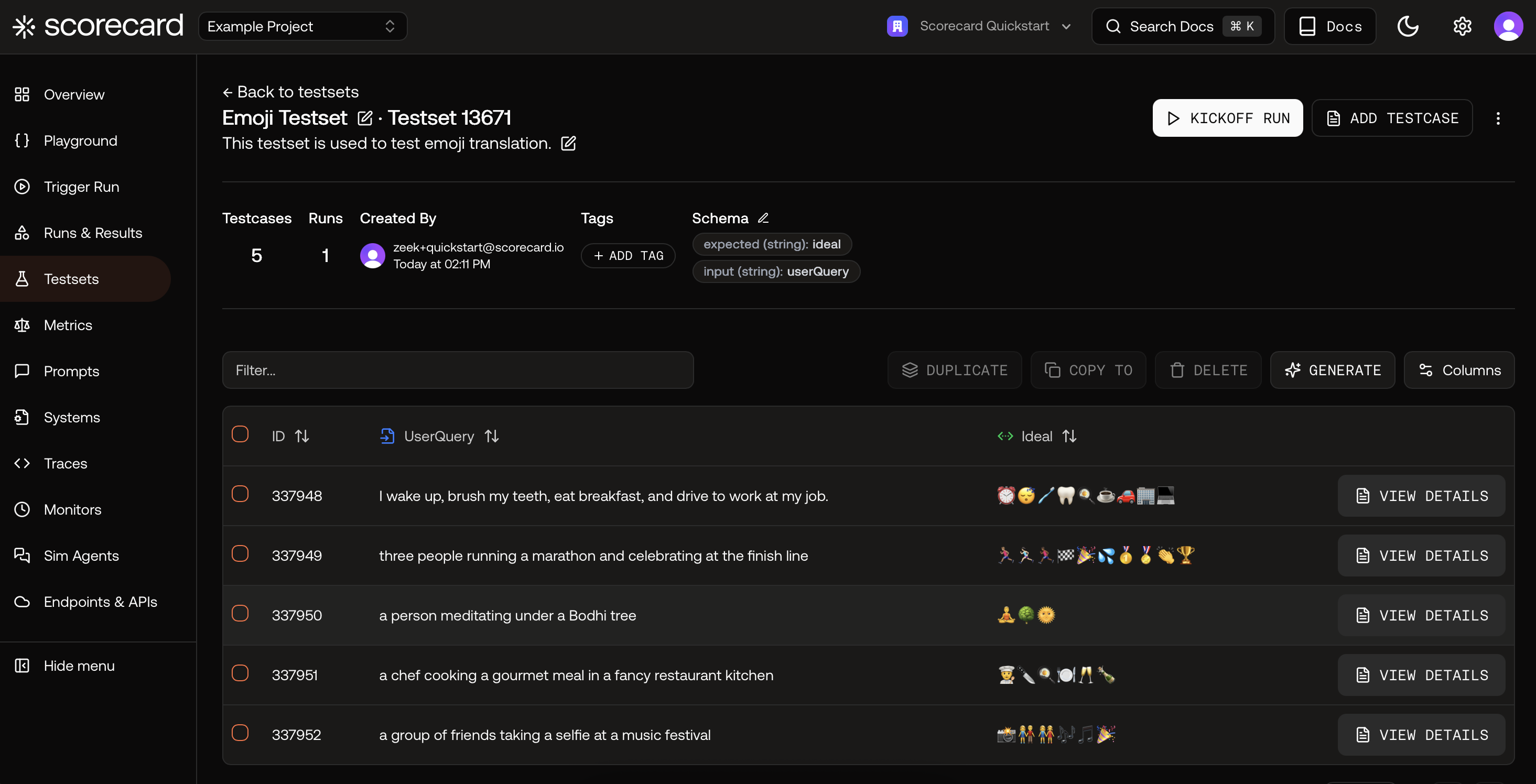

Open a Testset to see its schema and Testcases. Click a testcase row to view its inputs and expected outputs.

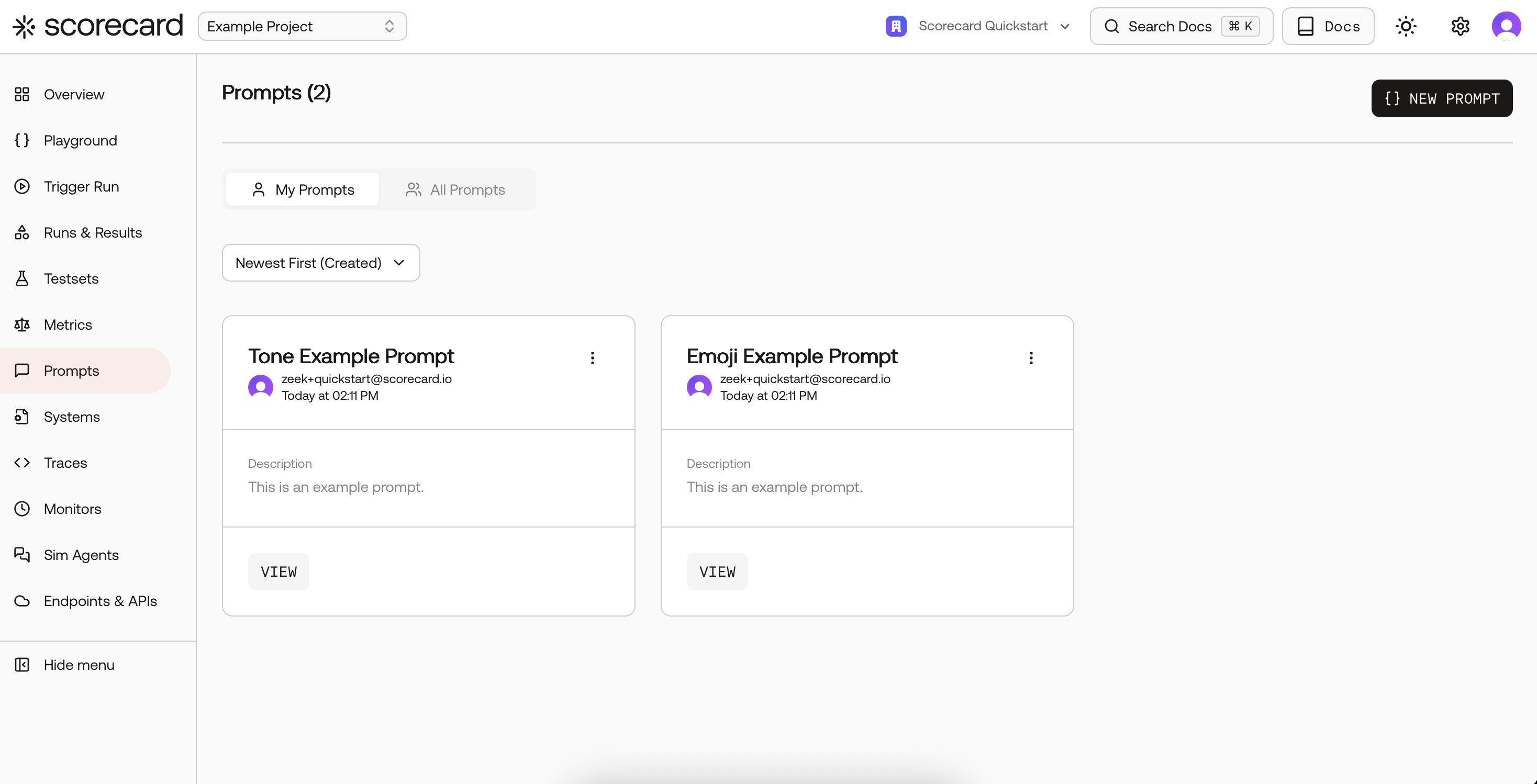

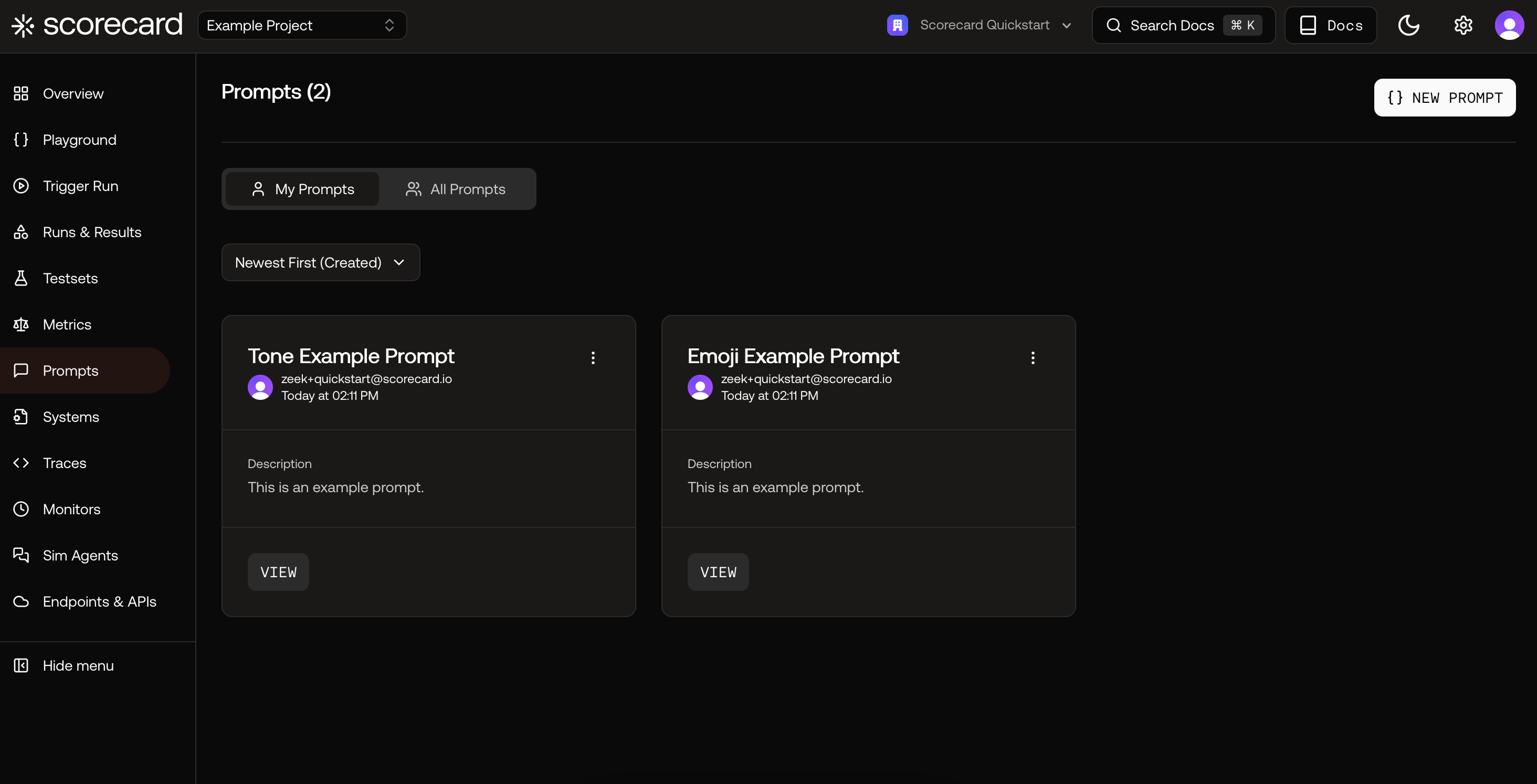

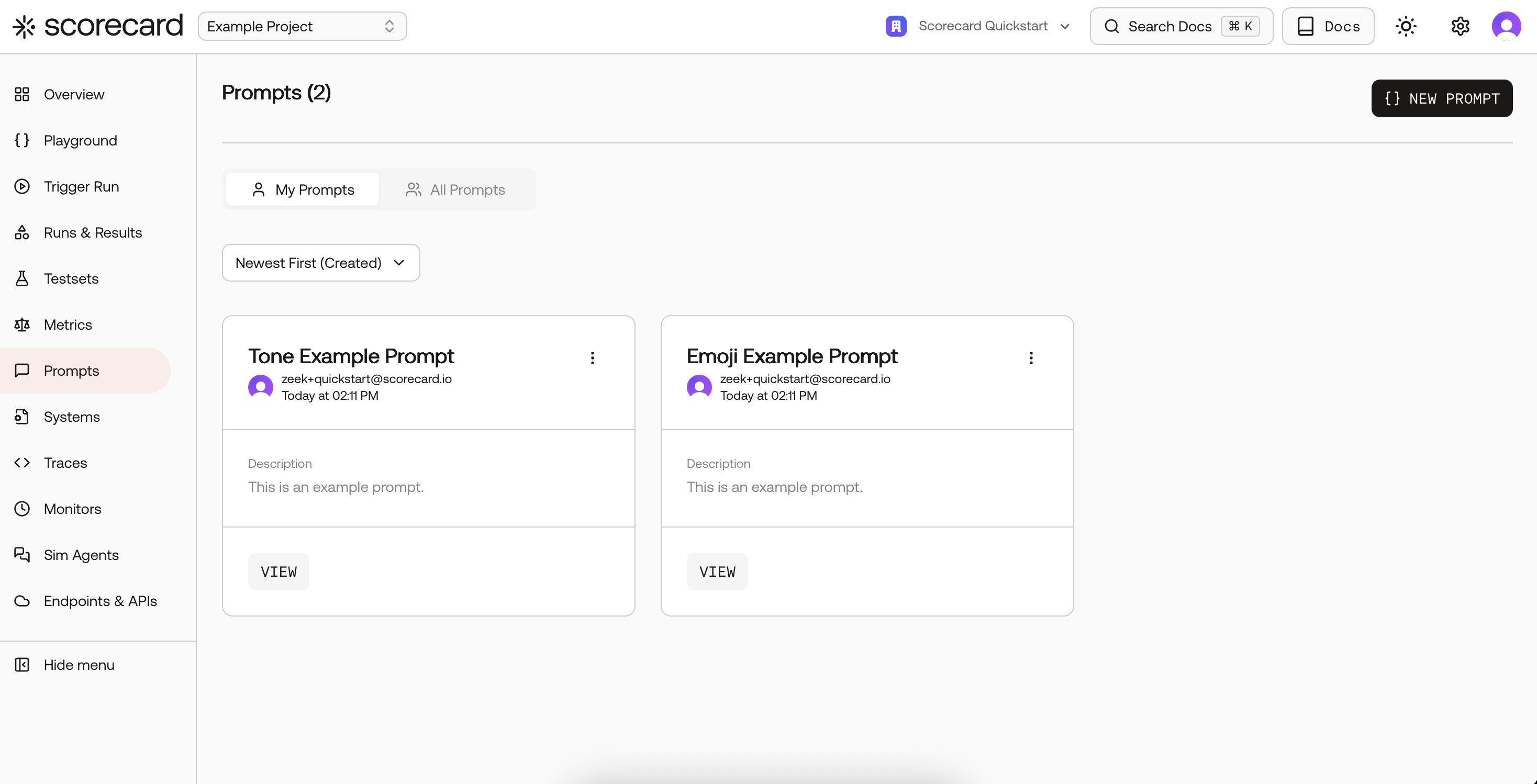

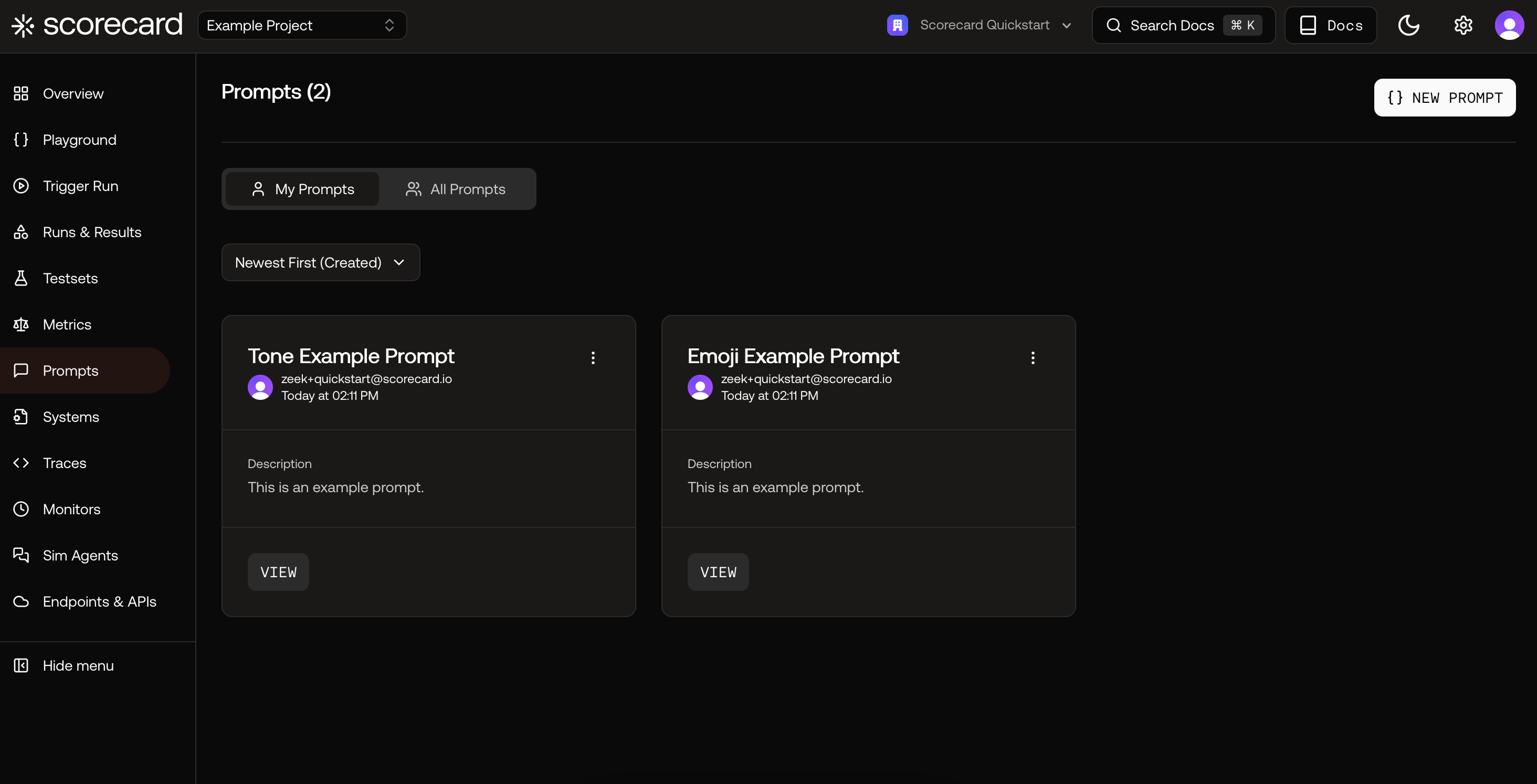

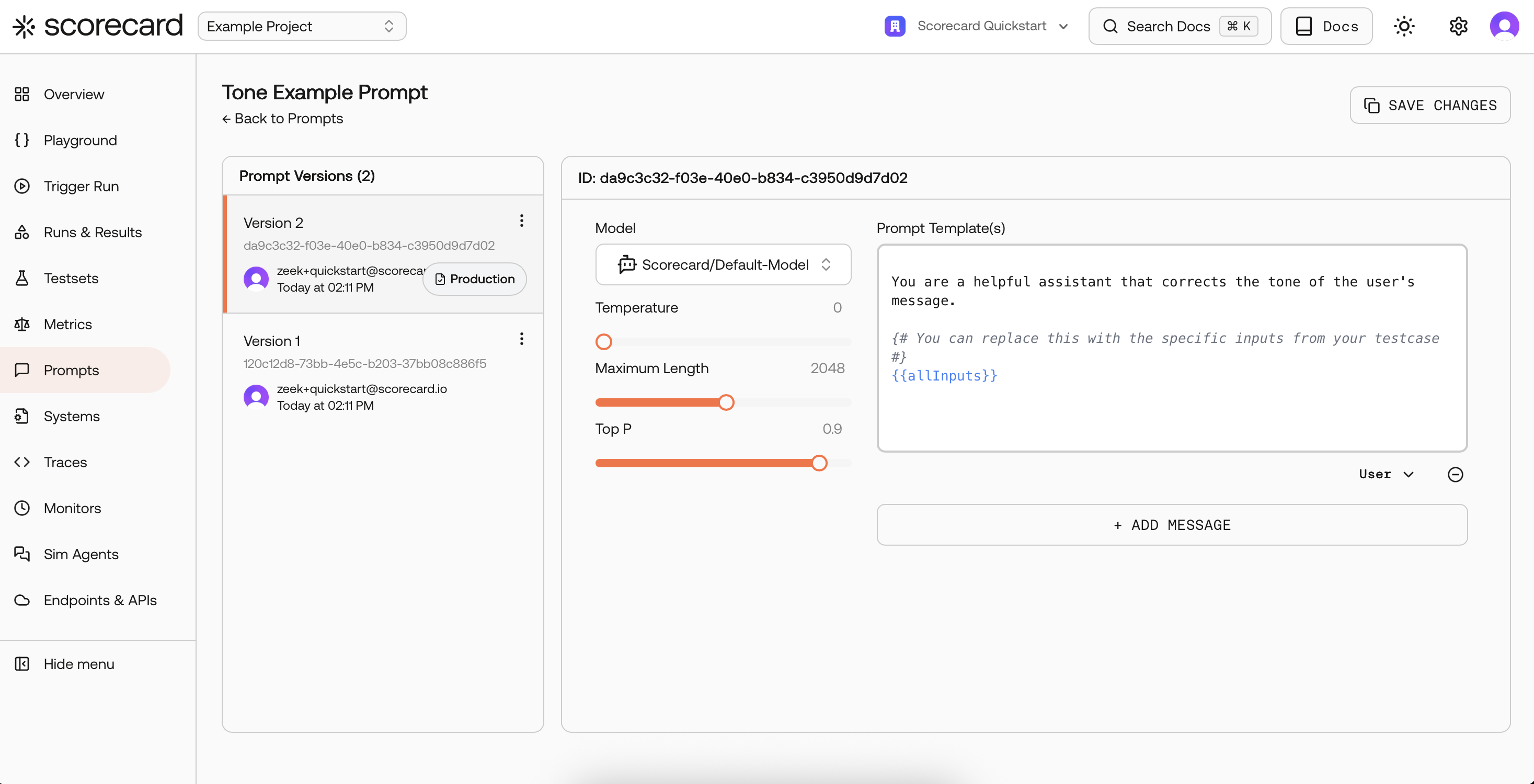

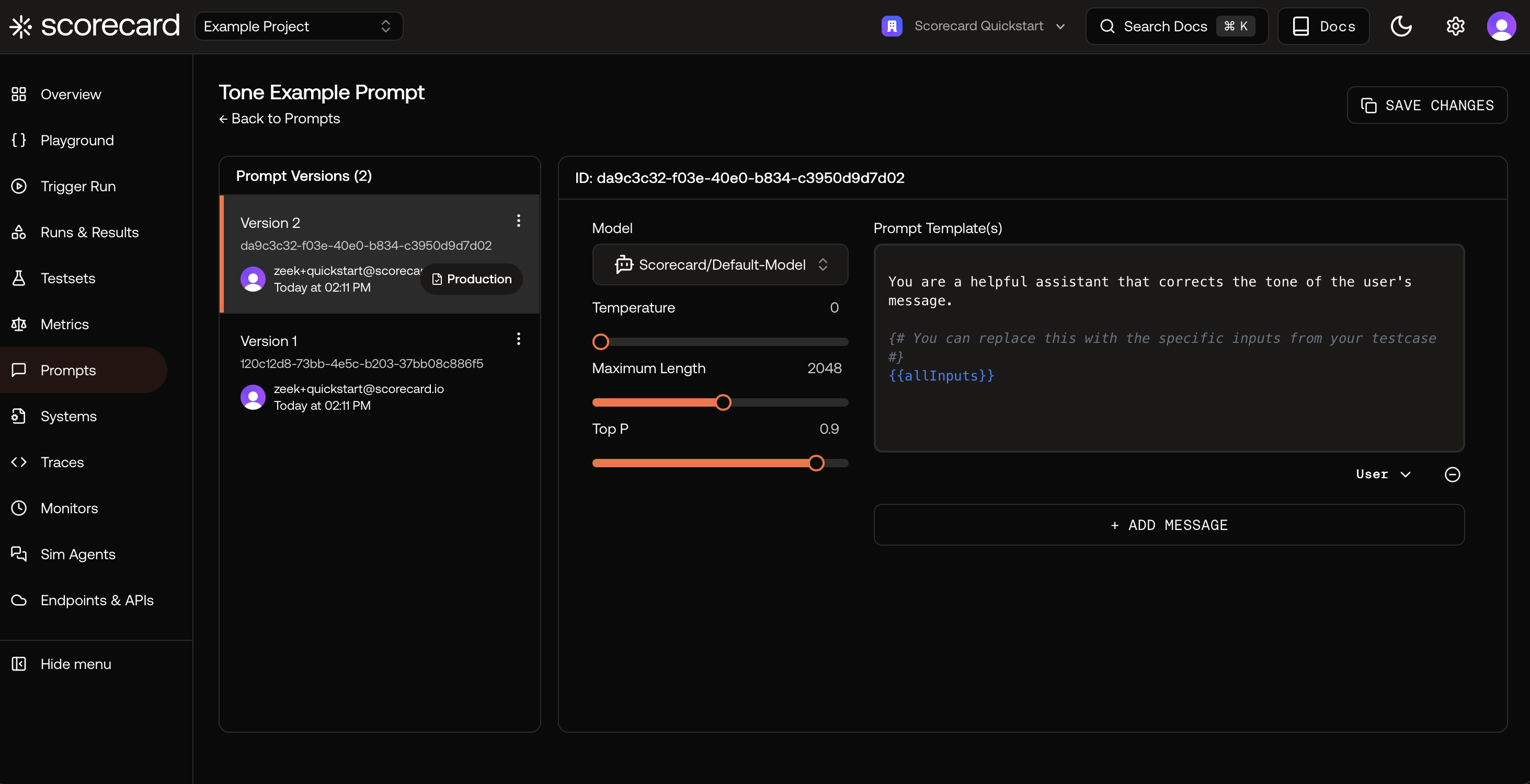

Next, browse Prompts. Use “View” to open a prompt, review messages, and model settings.

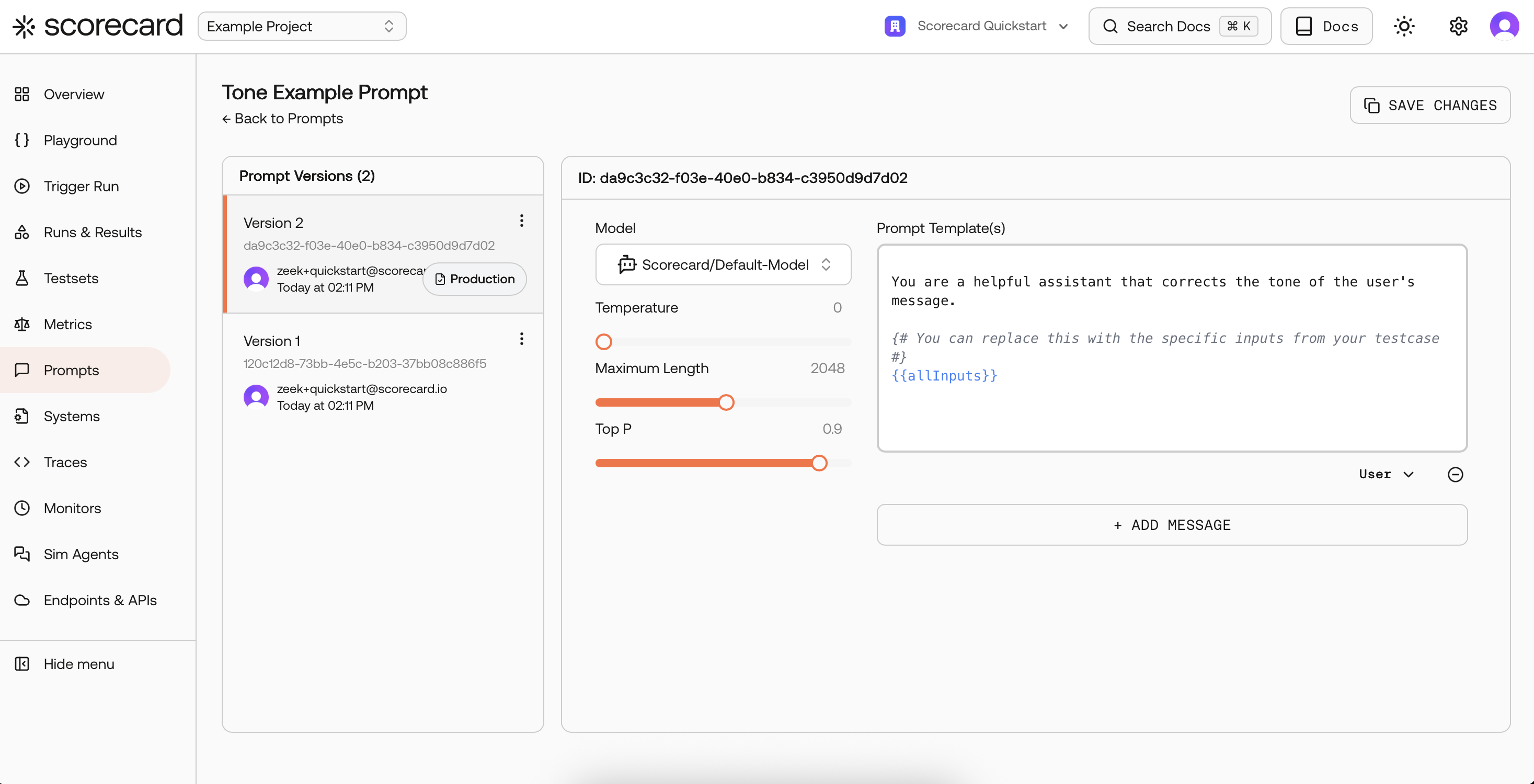

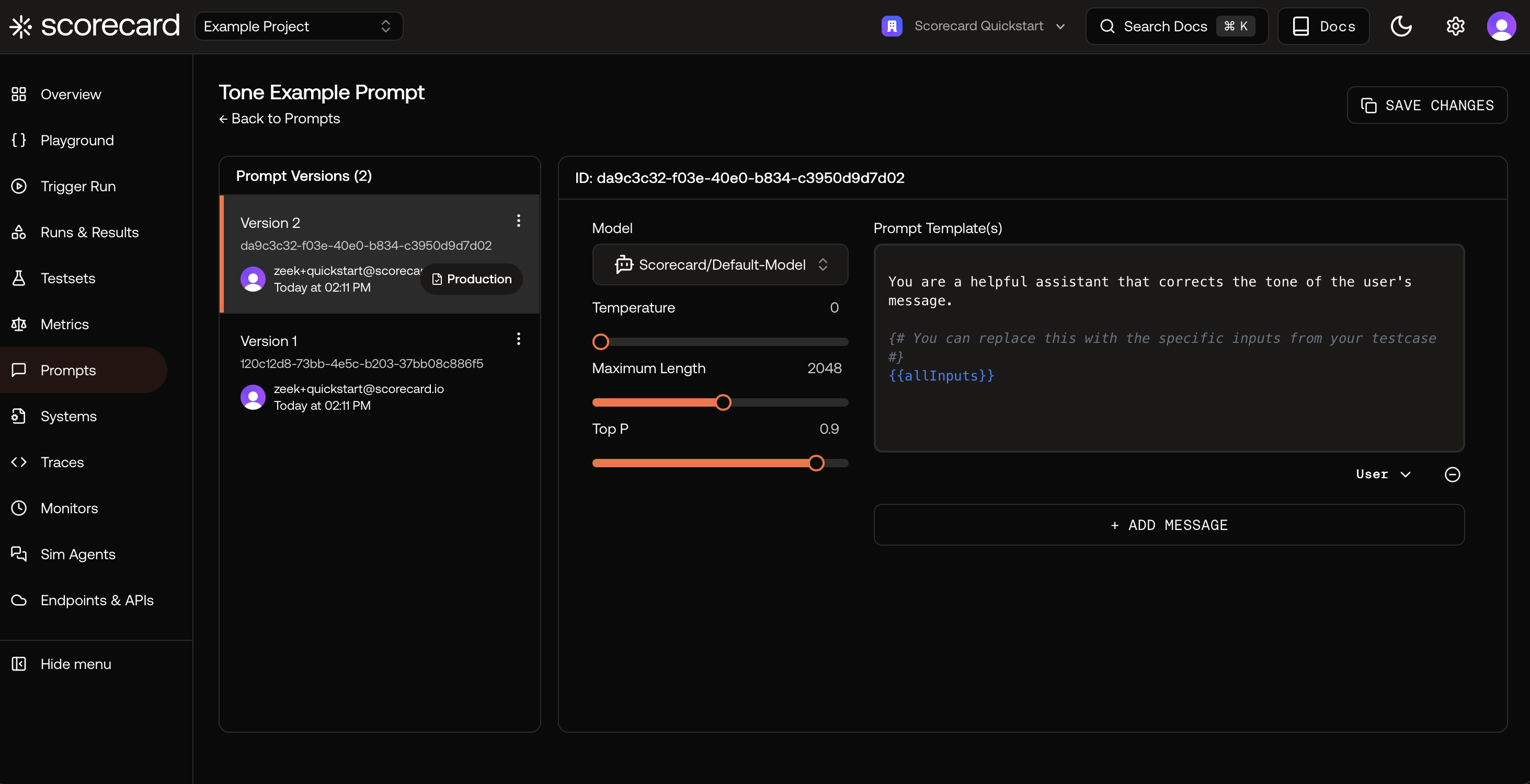

Inside a prompt version, see the template (Jinja-style variables) and evaluator model configuration.
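
As a rough illustration of what Jinja-style variables do, the sketch below substitutes `{{ name }}` placeholders using only the Python standard library. It is a simplified stand-in, not Jinja itself and not how Scorecard renders prompts. The variable names `original` and `tone` come from the Tone Testset; the template wording and the `render` helper are hypothetical.

```python
import re

# Matches {{ name }} placeholders, tolerating optional inner whitespace.
PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render(template: str, variables: dict) -> str:
    """Replace each {{ name }} placeholder with the matching variable value."""

    def substitute(match):
        name = match.group(1)
        if name not in variables:
            # Fail loudly instead of silently leaving a gap in the prompt.
            raise KeyError(f"missing template variable: {name}")
        return str(variables[name])

    return PLACEHOLDER.sub(substitute, template)


# Hypothetical template text, for illustration only.
template = "Rewrite the following text in a {{ tone }} tone:\n\n{{original}}"
rendered = render(template, {"original": "Send me the report.", "tone": "polite"})
print(rendered)
```

Each testcase supplies its own values for the placeholders, so one prompt version can be evaluated against every row of a Testset.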

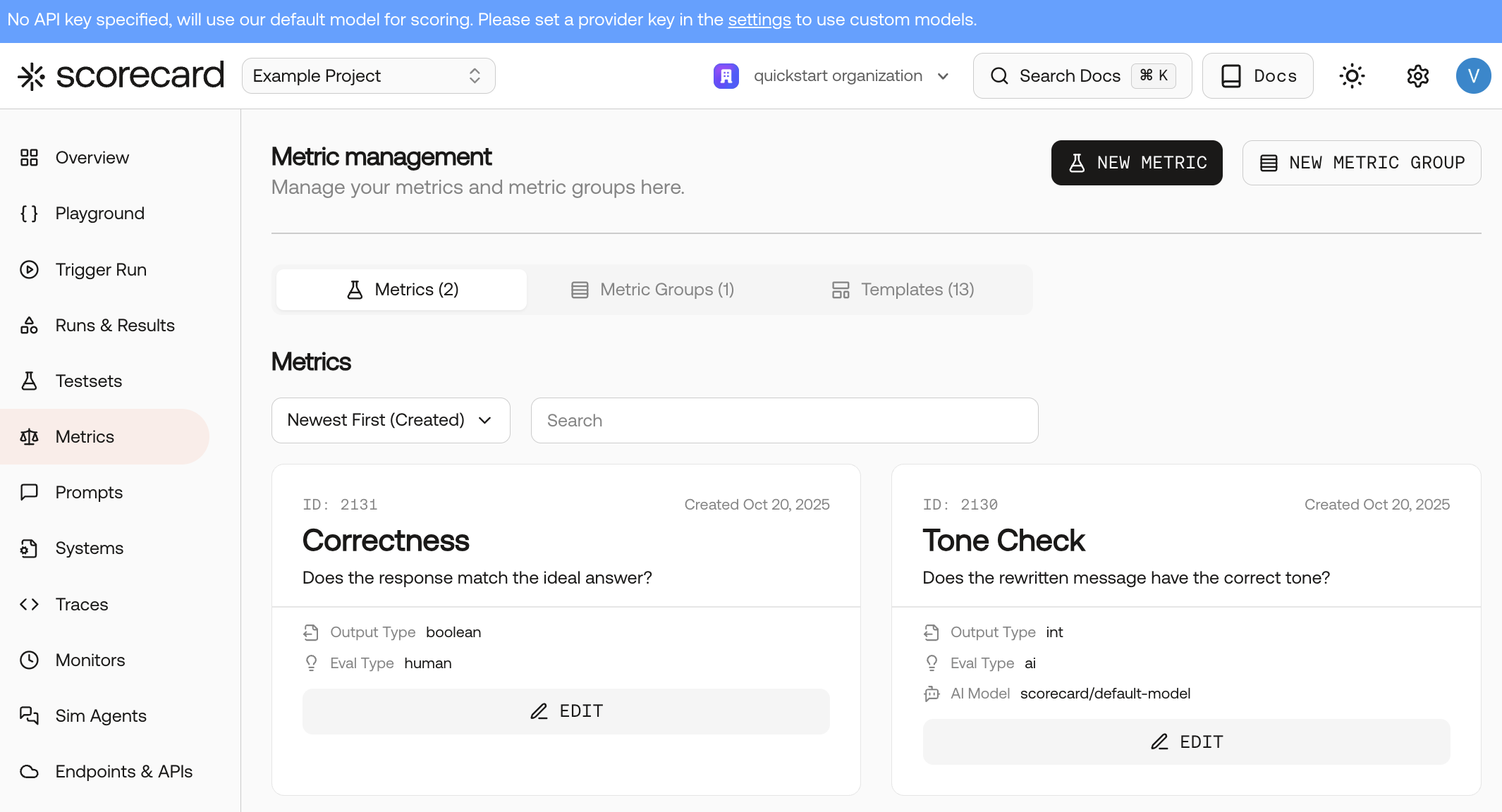

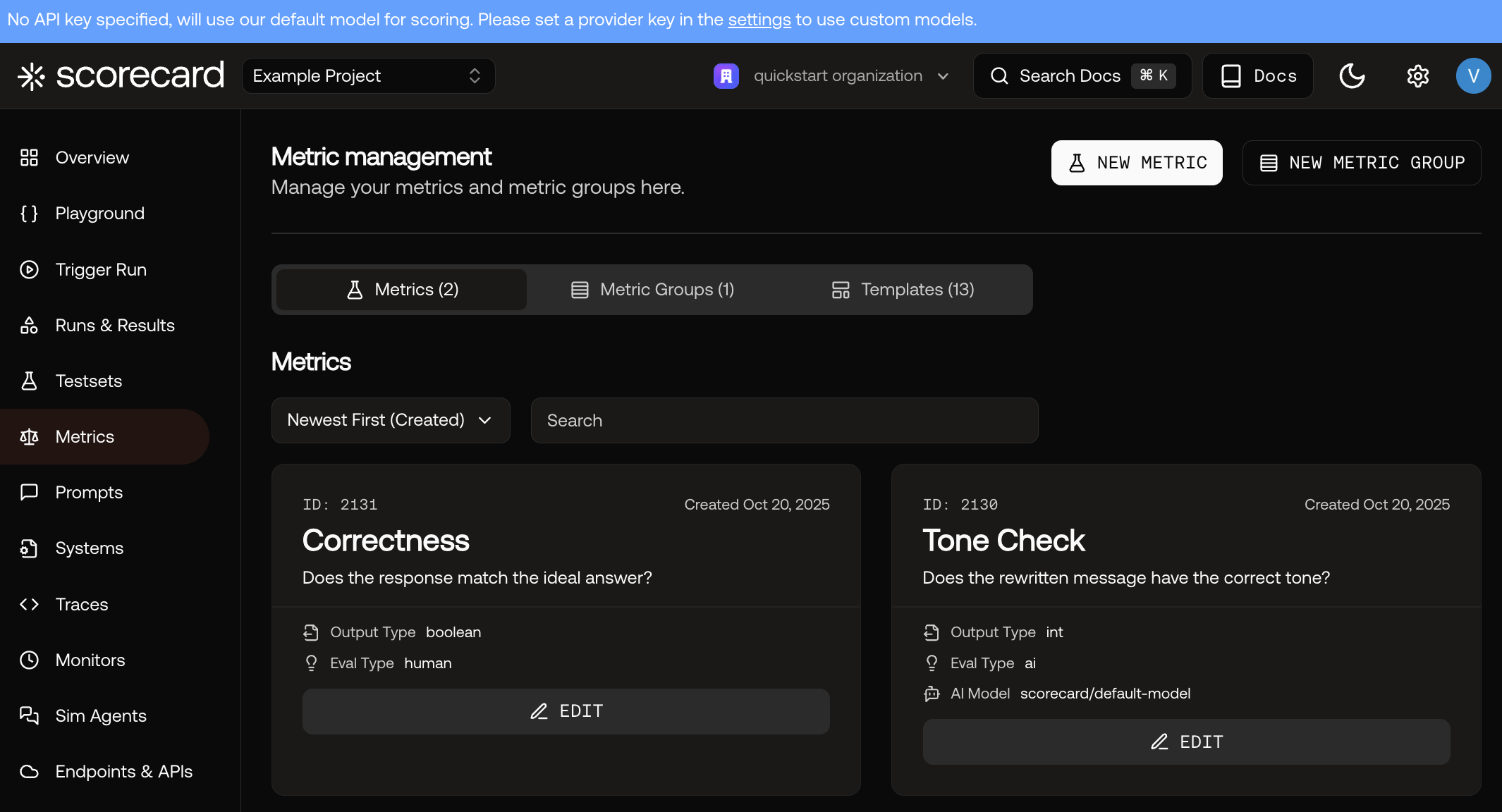

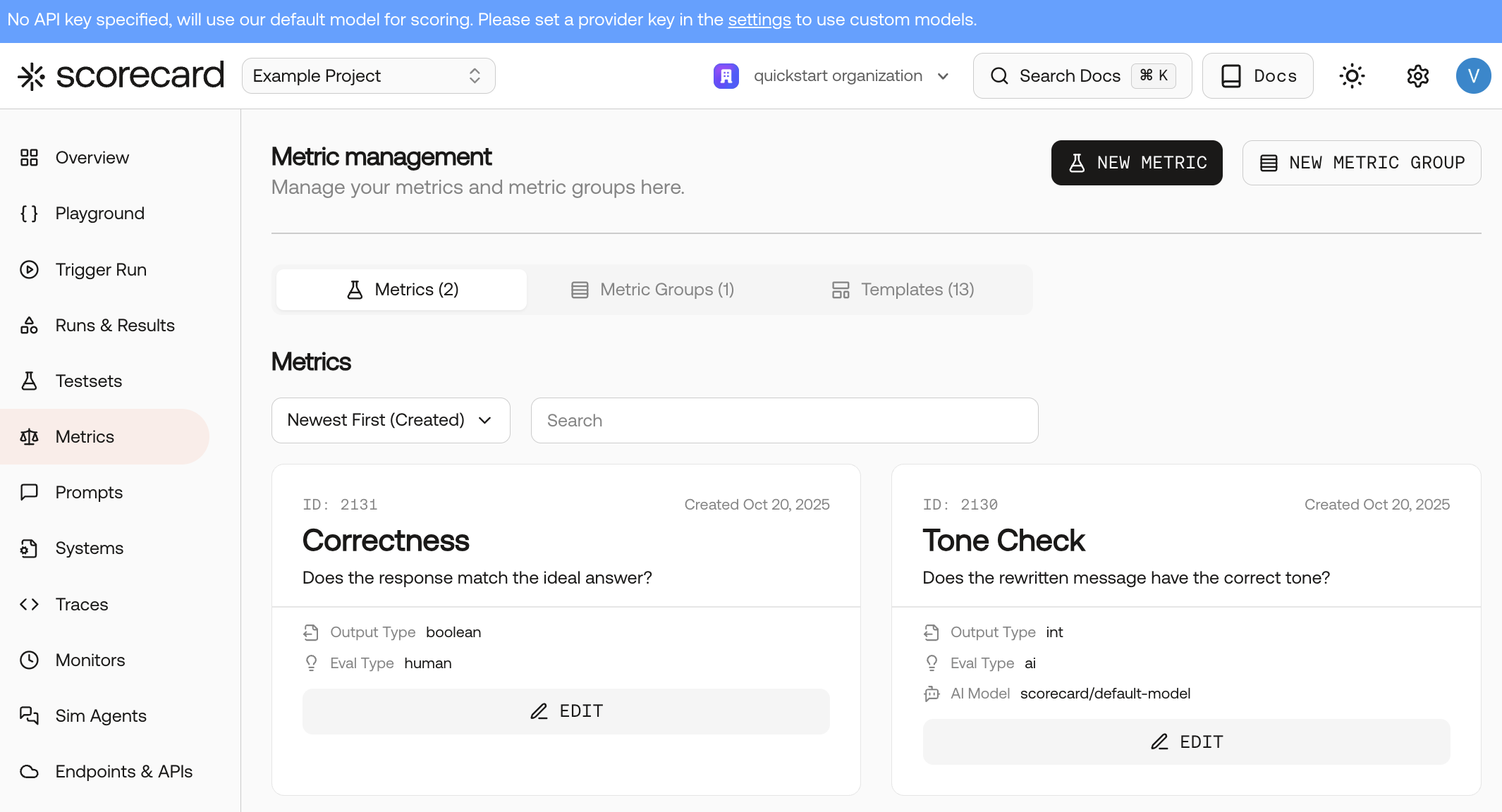

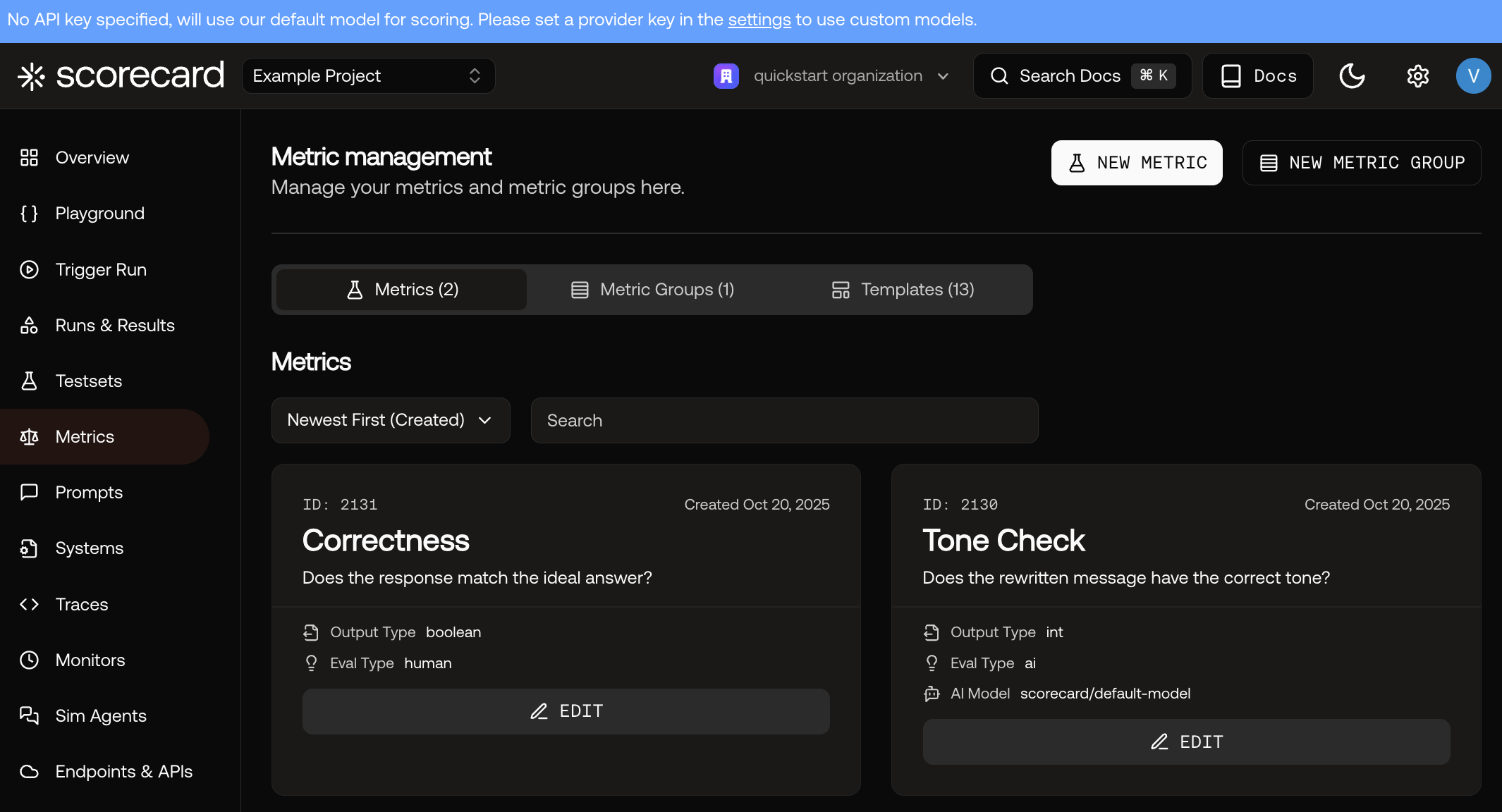

Finally, explore Metrics to learn how scoring works. Each metric has guidelines, evaluation type, and output type.

- Tone Testset: inputs `original` and `tone` → expected `idealRewritten`.

- Prompt versions for Tone: already set to Scorecard Cloud with low temperature for consistency.

- Metrics: Correctness (AI, 1-5), Human Tone Check (Human, Boolean).
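
The sample data above can be pictured as a small data model. The sketch below is an assumption-laden illustration in plain Python, not Scorecard's schema or SDK: the class names, the `output_type` strings, the guideline text, and the `is_valid_score` helper are all hypothetical. Only the field names `original`, `tone`, `idealRewritten` and the two metric descriptions are taken from the list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToneTestcase:
    original: str        # input: text to rewrite
    tone: str            # input: target tone
    idealRewritten: str  # expected output


@dataclass(frozen=True)
class Metric:
    name: str
    evaluation_type: str  # "AI" or "Human"
    output_type: str      # "int_1_5" or "boolean" (hypothetical labels)
    guidelines: str


# Guideline wording is invented for illustration.
METRICS = [
    Metric("Correctness", "AI", "int_1_5", "Does the rewrite preserve the meaning?"),
    Metric("Human Tone Check", "Human", "boolean", "Does the rewrite match the tone?"),
]


def is_valid_score(metric: Metric, score) -> bool:
    """Check that a score fits the metric's output type."""
    if metric.output_type == "boolean":
        return isinstance(score, bool)
    # bool is a subclass of int in Python, so exclude it explicitly.
    return isinstance(score, int) and not isinstance(score, bool) and 1 <= score <= 5


example = ToneTestcase(
    original="Send me the report.",
    tone="polite",
    idealRewritten="Could you please send me the report?",
)
```

Keeping the output type on the metric rather than on the score makes it possible to reject a Boolean submitted for a 1-5 metric, or the reverse, before results are aggregated.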

Where to go next

- Read about creating and managing Testsets in Testsets

- Dive deeper into running evaluations in Runs & Results

- Explore interactive prompt iteration in the Playground

- Define and reuse evaluation criteria with Metrics